HPE GreenLake: The HPC cloud that comes to you

Imagine having high-performance computing with cloud-like pricing and scaling. Well, imagine no more

Sponsored: By its very nature, high-performance computing is an expensive proposition compared to other kinds of computing. Scale and speed cost money, and that is never going to change. But that doesn’t mean that you have to pay for HPC all at once, or even own it at all.

And it doesn’t necessarily mean that you need to pay for a bunch of cluster experts to manage a complex system, either. That expertise can be a significant part of the overall cost of an HPC system, and finding it is often a lot more difficult than getting an HPC system through the budgeting process.

Traditionally, organizations have signed leasing or financing agreements to cushion the blow of a big capital outlay required to build an HPC cluster, which includes servers (usually some with GPU acceleration these days), high speed and capacious storage, and fast networking to link it all together.

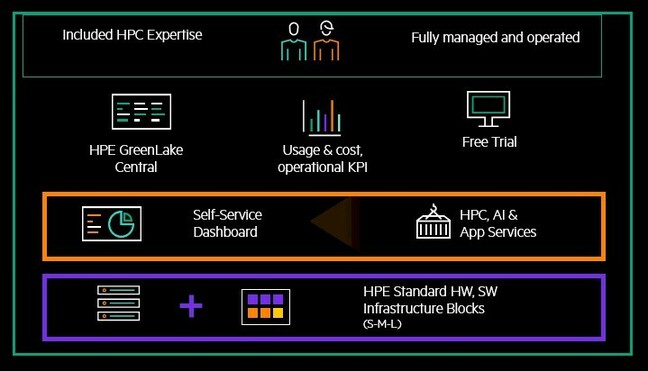

However, the same kind of pay-per-use pricing, self-service, scalability, and simplified IT operations that come with the cloud are, thankfully, available on-premises for HPC systems through the HPE GreenLake for HPC offering, which previewed in December 2020 and which will be in selected availability in June, with general availability coming shortly thereafter.

There is much more to HPE GreenLake than a superior cloud-like pricing scheme. But after getting a preview of HPE GreenLake for HPC, we boiled it all down to this: HPE GreenLake is like having a local cloud on your premises for running IT infrastructure – one that is owned by HPE, managed by HPE’s substantial pool of experts and a lot of automation it has developed, and used by you. In this case we are focusing on the traditional HPC simulation and modeling, machine learning and other forms of AI, and data analytics workloads that are commonly called high performance computing these days.

Ahead of the HPE Discover conference at the end of June 2021, Don Randall, worldwide marketing manager of the HPE GreenLake ‘as-a-service’ offerings, gave us a preview of what the full HPE GreenLake for HPC service will look like and some hints about how it will be improved over time.

HPE has sold products under the HPE GreenLake as-a-service model for a dozen years now, and it has some substantial customers in the HPC arena using earlier versions of the service, including Italian energy company Ente Nazionale Idrocarburi (ENI) and German industrial manufacturer Siemens, which has HPE GreenLake for HPC systems in use in 20 different locations around the globe.

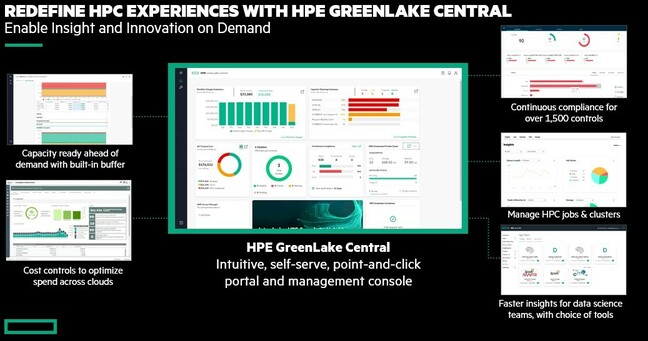

With the update this year, HPE is adding the GreenLake Central master console on top of its as-a-service offering. It is also integrating telemetry from on-prem clusters with usage and cost data from public clouds such as Amazon Web Services and Microsoft Azure, which will allow GreenLake shops to see the totality of their on-premises GreenLake infrastructure alongside the public cloud capacity they use. This is all cloudy infrastructure, after all, and as Randall explains, HPE absolutely expects and wants customers to use the public clouds “opportunistically” when it is appropriate.

The earlier versions of HPE GreenLake for HPC lacked the automated firmware and software patching capabilities that HPE is rolling out this year, and the fit and finish has improved considerably, too, according to Randall. And there are plans to add more features and functions to GreenLake for HPC in the coming months and years, some of which HPE is willing to hint about now.

HPE GreenLake has been evolving over the years, and adding features to expand support for HPC customers, for good reason. Despite a decade and a half of cloud computing, 83 percent of HPC implementations are outside of the public cloud, according to Hyperion Research. And they are staying on-premises for good, sound reasons. HPC is, by definition, not the general-purpose computing, networking, and storage that is typically deployed in an enterprise datacenter. Compute is often denser and hotter, networks are heftier, and storage is bigger and faster; the scale is generally larger than what is seen for other kinds of systems in the enterprise.

And moving to the public cloud presents its own issues, including latency between users and the closest public cloud regions. The size of datasets also creates its own gravity, which makes it very hard – and expensive – to move data off the public cloud once it has been placed there. And then there is the issue of application entanglement. In some cases, applications are so intertwined that they can’t be moved piecemeal to the cloud, so you end up in an all-or-nothing situation. Moreover, for latency reasons, HPC applications want to be near to HPC data. So you can’t break it apart that way, either, with data in the cloud and apps on premises, or vice versa, without paying some latency and cost penalties.

HPE GreenLake for HPC is meant to solve all of these issues, and more.

“We have got a ton of things that are putting us way out in the lead,” says Randall. “We have the expertise to design, integrate, and deliver HPC setups globally, and we are number one in HPC, and we have people who are really, really sharp. HPE has invented a lot of HPC technology or acquired it, and we have a services model that we have been refining and is well ahead of what other IT companies and public clouds are doing.” That services model is a key differentiator for HPE GreenLake for HPC, according to Randall. HPE puts more iron on the floor for an HPC system than the customer is using, so this excess capacity is ready to use when it is needed.

Self-service for the provisioning of compute, storage, and networks is done through the HPE GreenLake Central console, and the entire HPC stack (clusters, operating software, and so on) is managed by HPE experts from one of a dozen centers around the world. Customers operate the clusters with self-service capabilities in HPE GreenLake Central to manage queues, jobs, and output. HPE GreenLake for HPC gets HPC centers out of the business of maintaining the hardware and software of those clusters. While HPE is not offering application management services, it does have a widget with self-service capabilities that will snap into the GreenLake Central console, and it will entertain managing the HPC applications themselves under a separate contract if customers really want this.

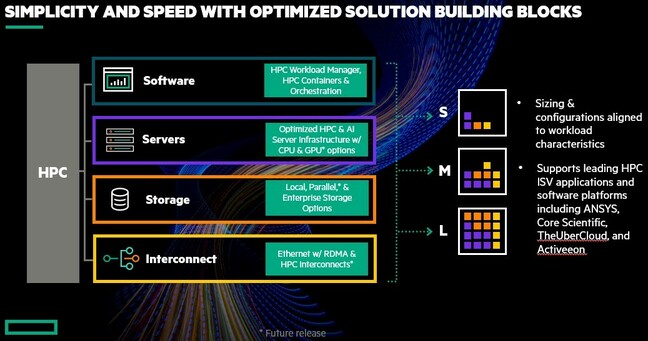

The initial GreenLake for HPC stack was built on the Singularity HPC-specific container platform, and over time it may evolve to use the HPE Ezmeral Kubernetes container platform. Initially, HPE GreenLake for HPC included HPE’s Apollo servers and storage, plus standard storage and Aruba interconnects, but it now includes Lustre parallel file systems, a homegrown HPE cluster manager, and the industry standard SLURM job scheduler as well as Ethernet networks with RDMA acceleration. The HPE Slingshot variant of Ethernet tuned for HPC and IBM’s Spectrum Scale (formerly known as General Parallel File System, or GPFS) parallel storage will be added in the future. HPE Cray EX compute systems will also be available under the HPE GreenLake for HPC offering, as will other parallel file systems that are up and coming in the HPC arena.

HPE started out with a focus on clusters to run computer aided engineering applications (with a heavy emphasis on ANSYS), but is expanding its HPE GreenLake cluster designs so they are tuned for financial services, molecular dynamics, electronic design automation, and computational fluid dynamics workloads, and it has an eye on peddling GreenLake for HPC to customers doing seismic analysis, weather forecasting, and financial services risk management. The scale of the machines offered under GreenLake for HPC will be growing, too, and Randall says that HPE will absolutely sell exascale-class HPC systems to customers under the GreenLake model.

We have a feeling that the vast majority of exascale-class systems could end up being sold this way, given the benefits of the HPE GreenLake approach. Imagine if all of the firmware in the systems were updated automagically, and ditto for the entire HPC software stack. Imagine proactive maintenance and replacement of parts before they fail.

Imagine not having to hire technical staff to design, build, and maintain a cluster, and getting the kind of cloud experience that people have come to expect without having to go all-in on one of the public clouds and make do with whatever compute, storage, and networking they have to offer – which may or may not be what you need in your HPC system for your specific HPC workloads. Imagine keeping the experience of an on-premises cluster, but having variable capacity inherent in the system that you can turn on and off with the click of a mouse. This is what HPE GreenLake for HPC can do, and it is going to change the way that companies consume HPC.

Sponsored by HPE