
If data center NetOps and DevOps are going to play nice, they need a digital sandbox

Nokia’s digital twin emulates your network and test environment using a fabric of containers

Sponsored: It’s easy to spot data center operators, says Jonathon Lundstrom: “They’re the folks with the frazzled hair.”

After all, says Lundstrom, who is director of business development for Nokia’s webscale organization, “They're the ones making sure that all the lights stay on, that traffic keeps flowing, and they're the ones who are the most concerned about risk management.” For data center network operators, stability is everything. But designing network and data center infrastructure for stability from the outset is just the first challenge. Maintaining that stability in day-to-day operations is another. And then there’s the challenge of troubleshooting and remediating when something, inevitably, goes wrong, ideally without making things worse in the process.

An additional complication for infrastructure teams over the last ten years has been the revolution in applications development wrought by DevOps, and how this affects the way organizations manage compute, storage and – to a lesser extent so far – network infrastructure.

“The DevOps methodologies presuppose that the network is flexible and can change rapidly, that it's easily consumable,” Lundstrom explains. “But unfortunately, they leave it to the network operations organization to manage all that risk.” Part of the answer is for network teams to follow the dev team’s lead and rely on more automation. But in turn, they must be confident that these automations are consistent, and that they maintain the integrity of the network, uptime, and mean time to repair.

This leaves network teams in a bind. How can they deliver the agility and automation modern enterprises need, while continuing to deliver stability and recovering quickly when problems do occur?

The trite answer is more resources for exhaustive testing and validation. But as Lundstrom explains, the traditional approach to testing and validating infrastructure configuration is to set up physical labs – effectively microcosms of the live network. This presents its own challenges. It is resource intensive, both in terms of buying the equipment and in time spent maintaining the environments. And that’s even before you factor in the time that staff spend building network designs, validating functionality, and troubleshooting issues in these systems.

Also, whatever the initial configuration of the lab network, it is liable to drift from the configuration of the live network over time, increasing the risk of new problems arising and making them even more difficult to solve.

Meet your digital twin

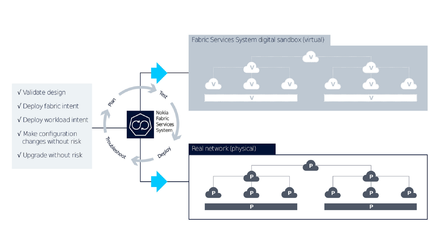

Nokia aims to resolve these issues with the Fabric Services System, which is part of its Data Center Switching Fabric solution, along with its Service Router Linux (SR Linux) NOS and Nokia’s own switching hardware platforms. Included within the Fabric Services System is a digital sandbox, which is a containerized environment that can produce a digital twin of the planned or live physical network.

As Lundstrom explains it, the digital sandbox is “functionally embedded in the Fabric Services System and spawns containerized SR Linux instances on demand. It creates the virtual plumbing for a newly designed network, or an emulated replica of the live environment.”

The code running in the sandbox is the same as that running on the switches in the physical environment. This gives NetOps teams the ability to replicate – and test – multiple scenarios, including new configurations and applications, to evaluate how they will perform prior to full deployment. The time savings this provides are measured in days and weeks, says Lundstrom. He elaborates: “The configuration is identical, and we can not only simulate but actually emulate both the control plane and the data plane of the network so that you can see exactly what the expected and real behaviours are going to be in that digital sandbox before you get to production.” This capability allows NetOps teams to gain confidence, and know what to expect, thus reducing the level of risk when making configuration changes in the production network.

Nokia’s entire Fabric Services approach is designed to be open – customers can choose from a range of Nokia hardware platforms today, but SR Linux is architected to support white-label platforms and could do so in the future. Customers can also choose the protocols they want to leverage, use the tools of their choice, or even develop their own network applications, which SR Linux will manage and make available to all users in a similar way to how it manages its own applications. This philosophy is, necessarily, replicated in the digital sandbox.

“If customers have physical devices from other vendors, we can also connect those into the sandbox,” says Lundstrom. “With a little bit of plumbing between the server that the sandbox is running on, and the physical devices in their lab environments, live interop can be validated and tested as well. Additionally, external sources of information like BGP peers, route reflectors, or traffic generators can also be connected to the sandbox.”

“SR Linux devices” in the sandbox can also be fully managed by any other device, Lundstrom explains. “So, scripting tools and Ansible can leverage the API of the sandbox-generated nodes. It's like building a dev environment for your orchestration team, right there, in a containerized, virtualized environment … for us it is a complement to the existing orchestration test environments that a customer has. The sandbox just gives NetOps teams an easy place to go and play.”

As part of testing and validation for new networks, network teams want to know what to expect when something goes wrong, but with traditional approaches, even subtle differences between lab and live configurations can throw up different behaviours. By having an exact digital twin, he says, “Even things like upgrades and failure testing can be predictable in both environments.” The rollout of the digital sandbox is accompanied by “Nokia Certified Designs”, says Lundstrom: “Those are embedded best practices for the creation of data center network infrastructure, as well as overlay services in that data center.”

This is all designed to feed the desire of operations people for consistency. Every per-node config change that differs from the “golden” config represents a risk, he says. “I could be implementing something on that node that affects not only the customer that I'm trying to fix, or I'm trying to configure, but also other customers.” And the impact of these changes may only become apparent later, when something goes wrong. To reduce the possibility of this, the Fabric Services System constantly monitors when and where changes happen, and tracks variations from the intents of the overlay or underlay services on each node.
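
To make the idea of drift from a “golden” config concrete, here is a minimal Python sketch. It is not the Fabric Services System's implementation or API: the flattened path/value dictionaries, the node names, the config paths and the `find_drift` helper are all hypothetical, chosen only to illustrate comparing each node's running config against an intended baseline.

```python
from typing import Dict, List, Tuple

# Hypothetical representation: a config flattened to path -> value strings.
Config = Dict[str, str]


def find_drift(golden: Config, running: Config) -> List[Tuple[str, str, str]]:
    """Return (path, expected, actual) for every deviation from the golden config."""
    missing = "<absent>"
    drift = []
    # Check the union of paths so both changed and unexpected settings are caught.
    for path in sorted(set(golden) | set(running)):
        expected = golden.get(path, missing)
        actual = running.get(path, missing)
        if expected != actual:
            drift.append((path, expected, actual))
    return drift


# Illustrative data only; these paths and values are made up.
golden = {"interface.ethernet-1/1.mtu": "9000", "bgp.as": "65001"}
nodes = {
    "leaf1": {"interface.ethernet-1/1.mtu": "9000", "bgp.as": "65001"},
    "leaf2": {
        "interface.ethernet-1/1.mtu": "1500",
        "bgp.as": "65001",
        "acl.debug": "permit-any",
    },
}

report = {name: find_drift(golden, cfg) for name, cfg in nodes.items()}
for name, deviations in report.items():
    for path, expected, actual in deviations:
        print(f"{name}: {path} expected {expected}, found {actual}")
```

In this toy data, `leaf1` matches the baseline while `leaf2` carries both a changed value and a setting the baseline never intended, which is the kind of per-node variation the paragraph above describes as a risk.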

It’s about consistency. Again

Although there is a clear benefit for initial network design or for the testing of proposed config changes, the sandbox should also give operators breathing space when it comes to Day 2+ management and live troubleshooting. “There's always flashing red lights, the key is to sort the important ones from those that are not,” says Lundstrom. But by instantly firing up a digital twin of the live network, “You have a safe place to go and investigate without the potential of causing additional problems.”

Once a problem has been identified, he continues, the root cause can be isolated, and fixes tested, in the digital sandbox, again without the potential of isolating other customers. The Fabric Services System automates the introduction of fixes to the production environment in a way that is similar to continuous development principles, and the digital sandbox makes post-deployment validation easier because it serves as a working reference of the expected behaviour.

While the idea of containerizing a fabric of network operating systems might not be entirely unique to Nokia, Lundstrom says, “What other people aren't doing is the orchestration of the emulation environment, the ability to basically push a button and twin your physical network into a virtualized environment and have it automatically set up the same way, with the same production configuration. The same connections, the same control plane and scaled down data plane.”

Today, the sandbox can emulate a network of 500 nodes, which could easily represent a data center containing 20,000 servers. How far customers scale their own sandbox will depend on the amount of compute they make available. “But the amount of physical gear that we would be replacing with this virtualized environment could be staggering,” says Lundstrom. “And that's both a CapEx savings for the operator, as well as just the operational time of having to go and do all that reconfiguration or new feature validation or troubleshooting exercise.”

But while saving money on CapEx is one thing, he says, the time savings from not having to physically build testing, configuration and validation setups are potentially far more significant. “We're talking about going from days and weeks … to minutes and hours,” he says.

Using the Fabric Services System to provide automation and its digital sandbox to provide an emulated environment, NetOps teams can decrease risks and improve the metrics they are judged by – such as uptime, mean time to repair and performance. And they can keep pace with the growing needs of their DevOps colleagues for speed and flexibility.

No doubt NetOps teams can use the time saved to do even more exhaustive testing, as well as tackling new strategic challenges, working more closely with their DevOps colleagues, and optimizing the network for new workloads. And if they’re really lucky, they might be able to take some time out to finally fix that frazzled hair.

Sponsored by Nokia.