
Put data first when deploying scale-out file storage for accelerated systems

'When storage hardware is added as a quick fix without a well thought out strategy, problems will often arise'

It is easy to spend a lot of time thinking about the compute and interconnect in any kind of high performance computing workload, but the storage supporting that workload deserves just as much attention. It is particularly important to think about the type and volume of the data that will feed into these applications because this, more than any other factor, will determine whether that workload succeeds or fails in meeting the needs of the organization.

It is in vogue these days to have a “cloud first” mentality when it comes to IT infrastructure, but what organizations really need is a “data first” attitude, along with the recognition that cloud is just a deployment model with a pricing scheme and, perhaps, a deeper pool of resources than many organizations are accustomed to. But those deep pools come at a cost. It is fairly cheap to move data into clouds, or to generate it there and keep it there; however, it can be exorbitantly expensive to move data out of a cloud so it can be used elsewhere.

The new classes of HPC applications, such as machine learning training and data analytics running at scale, tend to feed on or create large datasets, so it is important to have this data first attitude as the system is being architected. The one thing you don’t want to do is find out somewhere between proof of concept and production that you have the wrong storage – or worse yet, find out that your storage can’t keep up with the data as a new workload rolls into production and is a wild success.

“When storage hardware is added as a quick fix without a well thought out strategy around current and future requirements, problems will often arise,” Brian Henderson, director of unstructured data storage product marketing at Dell Technologies, says. “Organizations buy some servers, attach some storage, launch the project, and see how it goes. This type of approach very often leads to problems of scale, problems of performance, problems of sharing the data. What these organizations need is a flexible scale-out file storage solution that enables them to contain all of their disparate data and connect all of it so stakeholders and applications can all quickly and easily access and share it.”

So, it is important to consider some key data storage requirements before the compute and networking components are set in stone in a purchase order.

The first thing to consider is scale. Assume from the get-go that you will need it, and find a system that can start small but grow large enough to contain all of the data and serve disparate systems and data types.

Although it may be possible to rely on internal storage or a hodgepodge of storage attached to individual systems or clusters, HPC and AI workloads are more often than not accelerated by NVIDIA GPUs, which raise the bar for how much data the storage must hold and how fast it must deliver it. It is best to assume that compute, storage, and networking will all have to scale as workloads and datasets grow and proliferate. There are many different growth vectors to consider, and forgetting any of them can lead to capacity and performance issues down the road.
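One way to see why every growth vector matters is a toy sizing calculation: capacity and throughput each imply their own node count, and a scale-out cluster has to be sized for whichever is larger. The sketch below assumes linear per-node scaling and uses hypothetical per-node figures (`usable_tb`, `read_gbps`) and a hypothetical three-node minimum; real clusters lose some capacity to data protection overhead and do not scale perfectly.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSpec:
    """Per-node figures for a hypothetical scale-out storage node."""
    usable_tb: float   # usable capacity per node, in TB (assumed)
    read_gbps: float   # sustained read throughput per node, in GB/s (assumed)


def nodes_needed(spec: NodeSpec, capacity_tb: float, throughput_gbps: float,
                 min_nodes: int = 3) -> int:
    """Smallest node count that satisfies every growth vector at once.

    Capacity and throughput each imply their own node count; the cluster
    must be sized for whichever is larger, and never below min_nodes.
    """
    by_capacity = math.ceil(capacity_tb / spec.usable_tb)
    by_throughput = math.ceil(throughput_gbps / spec.read_gbps)
    return max(min_nodes, by_capacity, by_throughput)


spec = NodeSpec(usable_tb=20.0, read_gbps=5.0)
# Proof of concept: the data volume drives the node count.
print(nodes_needed(spec, capacity_tb=100, throughput_gbps=10))  # 5
# Same data, but GPU servers now demand far more bandwidth.
print(nodes_needed(spec, capacity_tb=100, throughput_gbps=60))  # 12
```

The second call shows the trap the paragraph warns about: the dataset has not grown at all, yet a cluster sized only for capacity would be less than half the size the GPUs need.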

And there is an even more subtle element to this storage scale issue. Both HPC and AI systems produce data that has to be archived. HPC applications take a small set of initial conditions and create a massive simulation and visualization that reveals something about the real world, while AI systems take massive amounts of information (usually a mix of structured and unstructured data) and distill it into a model that can be used to analyze the real world or react to it. These initial datasets and their models must be preserved for business reasons as well as for data governance and regulatory compliance.

You can’t throw this data away even if you want to

“You can’t throw this data away even if you want to,” says Thomas Henson, who is global business development manager for AI and analytics for the Unstructured Data Solutions team at Dell Technologies. “No matter what the vertical industry – automotive, healthcare, transportation, financial services – you might find a defect in the algorithms and litigation is an issue. You will have to show the data that was fed into algorithms that produced the defective result or prove that it didn’t. To a certain extent, the value of that algorithm is the data that was fed into it. And that is just one small example.”

So for hybrid CPU-GPU systems, it is probably best to assume that local storage on the machines will not suffice and that external storage capable of holding lots of unstructured data will be needed. For economic reasons, and because many AI and some HPC projects are still in the proof of concept phase, it is useful to start small and be able to scale capacity and performance quickly and, if need be, independently of each other.

The PowerScale all-flash arrays running the OneFS file system from Dell Technologies fit this storage profile. The base system comes in a three-node configuration with up to 11 TB of raw storage at a modest price under six figures, and it has been tested in the labs up to 250 nodes in a shared storage cluster that can hold up to 96 PB of data. Dell Technologies also has customers running PowerScale arrays at a much larger scale than this, although they often split their capacity into separate clusters to reduce the potential blast radius of an outage, which is extremely rare.

PowerScale can be deployed on-premises or it can be extended into a number of public clouds with multi-cloud or native cloud integrated options where customers can take advantage of additional compute or other native cloud services.

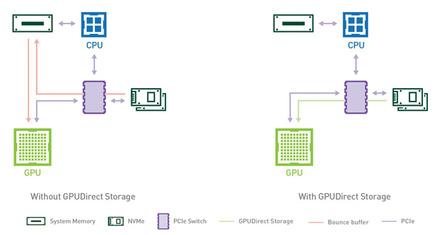

Performance is the other part of scale that companies need to consider, and it is particularly important when the systems are accelerated by GPUs. Ever since the early days of GPU compute, NVIDIA has worked to get the CPU and its memory out of the way so they do not become a bottleneck, both when GPUs share data with each other (GPUDirect) as they run their simulations or build their models, and when GPUs access storage (GPUDirect Storage), which has to happen lightning fast.

If external storage is a necessity for such GPU accelerated systems – there is no way servers with four or eight GPUs will have enough storage to hold the datasets that most HPC and AI applications process – then it seems clear that whatever that storage is has to speak GPUDirect Storage and speak it fast.

The previous record holder was Pavilion Data, which tested a 2.2 PB storage array and was able to read data into a DGX-A100 system based on the new “Ampere” A100 GPUs at 191 GB/sec in file mode. In the lab, Dell Technologies is putting the finishing touches on its GPUDirect Storage benchmark tests running on PowerScale arrays and says it can push performance considerably higher, to at least 252 GB/sec. And because PowerScale can scale to 252 nodes in a single namespace, performance does not have to stop there; it can scale far beyond that if needed.
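To put the 191 GB/sec and 252 GB/sec figures in perspective, here is a back-of-the-envelope sketch of how long one full read of a 2.2 PB dataset would take at each rate. Reusing the 2.2 PB array size from the Pavilion test as a dataset size is an illustrative assumption, and the calculation assumes the rate is sustained for the entire pass, which real training jobs rarely achieve; the outputs are arithmetic, not benchmark results.

```python
PB = 1e15  # decimal petabyte, in bytes


def hours_per_pass(dataset_bytes: float, rate_gb_per_s: float) -> float:
    """Hours to read a dataset once at a sustained rate (1 GB = 1e9 bytes)."""
    return dataset_bytes / (rate_gb_per_s * 1e9) / 3600


dataset = 2.2 * PB  # assumed dataset size, borrowed from the array in the Pavilion test
for label, rate in (("191 GB/sec", 191.0), ("252 GB/sec", 252.0)):
    print(f"{label}: {hours_per_pass(dataset, rate):.2f} hours per pass")
print(f"ratio: {252 / 191:.2f}x")
```

At those rates a full pass takes roughly 3.2 hours versus about 2.4 hours, so a training run that makes many passes over its data would see the roughly 1.3x difference on every pass.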

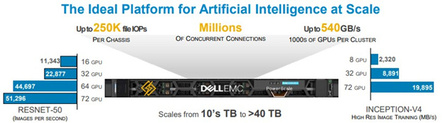

“The point is, we know how to optimize for these GPU compute environments,” says Henderson.

The breadth of support for various kinds of systems is another thing to consider while architecting a hybrid CPU-GPU system. Shared storage is meant to be shared, so it is important that other applications can use the data on it too. The PowerScale arrays have been integrated with over 250 applications and are certified as supported on many kinds of systems. This is one of the reasons that Isilon and PowerScale storage has over 15,000 customers worldwide.

High performance computing is about more than performance, particularly in an enterprise environment where resources are constrained and having control of systems and data is absolutely critical. So the next thing that must be considered in architecting the storage for GPU-accelerated systems is storage management.

Tooled up

On this front, Dell Technologies brings a number of tools to the party. The first is InsightIQ, which provides detailed storage monitoring and reporting for PowerScale and its predecessor, the Isilon storage array.

Another tool is called CloudIQ, which uses machine learning and predictive analytics techniques to monitor and help manage the full range of Dell Technologies infrastructure products, including PowerStore, PowerMax, PowerScale, PowerVault, Unity XT, XtremIO, and SC Series, as well as PowerEdge Servers and converged and hyperconverged platforms such as VxBlock, VxRail, and PowerFlex.

And finally, there is DataIQ, storage monitoring and dataset management software for unstructured data that provides a unified view of unstructured datasets across PowerScale, PowerMax, and PowerStore arrays as well as cloud storage from the big public clouds. DataIQ doesn’t just show you the unstructured datasets; it also keeps track of how they are used and moves them to the most appropriate storage, for example, on-premises file systems or cloud-based object storage.
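DataIQ’s actual placement logic is not described here, but the general idea of usage-driven data placement can be sketched with a simple, hypothetical age-based rule: datasets read recently stay on fast on-premises storage, and those untouched for a long time move to cloud object storage (still retained, not deleted). The tier names, thresholds, and dataset names below are all assumptions for illustration, not DataIQ settings.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical tiers and age thresholds; a real policy would be tuned to
# access patterns, cost, and compliance requirements.
TIERS = (
    (timedelta(days=30), "on-prem-flash"),
    (timedelta(days=180), "on-prem-archive"),
)
COLD_TIER = "cloud-object"


def choose_tier(last_access: datetime, now: datetime) -> str:
    """Return the first tier whose age window covers the dataset."""
    age = now - last_access
    for max_age, tier in TIERS:
        if age <= max_age:
            return tier
    return COLD_TIER


now = datetime(2022, 1, 1, tzinfo=timezone.utc)
datasets = {
    "training-set-v7": now - timedelta(days=3),
    "sim-run-2021-06": now - timedelta(days=120),
    "model-inputs-2019": now - timedelta(days=900),
}
for name, last_access in datasets.items():
    print(name, "->", choose_tier(last_access, now))
```

Keeping the thresholds in a single ordered table makes the policy easy to audit and change without touching the selection logic.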

The last consideration is reliability and data protection, which go hand in hand in any enterprise-grade storage platform. The PowerScale arrays have their heritage in Isilon and its OneFS file system, which has been trusted in enterprise, government, and academic HPC institutions for two decades. OneFS and its underlying PowerScale hardware are designed to deliver up to 99.9999 percent availability, while most cloud storage services that handle unstructured data are lucky to have service-level agreements for 99.9 percent availability. The former allows about 31 seconds of downtime a year, while the latter allows about eight hours and 46 minutes.
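The downtime figures follow directly from the availability percentages: the allowed downtime is the unavailable fraction multiplied by the length of a year. This sketch assumes a 365.25-day average year; with a 365-day year the results shift slightly, to about 31.5 seconds and 8 hours 45.6 minutes.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # average year, including leap days


def downtime_seconds(availability_pct: float) -> float:
    """Maximum downtime per year permitted by an availability percentage."""
    return (1 - availability_pct / 100) * SECONDS_PER_YEAR


for pct in (99.9999, 99.9):
    secs = downtime_seconds(pct)
    print(f"{pct}%: {secs:.1f} s/year ({secs / 3600:.2f} h)")
```

At 99.9999 percent the budget is about 31.6 seconds a year; at 99.9 percent it is about 31,558 seconds, or 8.77 hours, which rounds to 8 hours and 46 minutes.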

Moreover, PowerScale is designed to give good performance and maintain data access even if some of the nodes in the storage cluster are down for maintenance or repairing themselves after a component failure. (Component failures are unavoidable for all IT equipment, after all.)

But there is another kind of resiliency that is becoming increasingly important these days: recovery from ransomware attacks.

“We have API-integrated ransomware protection for PowerScale that will detect suspicious behavior on the OneFS file system and alert administrators about it,” says Henderson. “And a lot of our customers are implementing a physically separate, air-gapped cluster setup to maintain a separate copy of all of their data. In the event of a cyberattack, you just shut down the production storage and you have your data, and you are not trying to restore from backups or archives, which could take days or weeks – particularly if you are restoring from cloud archives. Once you are talking about petabytes of data, that could take months.

"We can restore quickly, at storage replication speeds, which is very, very fast. And you have options to host your ransomware defender solution in multi-cloud environments where you can recover your data from a cyber event leveraging a public cloud."
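Henderson’s point about restore times comes down to simple arithmetic: transfer time is data volume divided by effective bandwidth. This back-of-the-envelope sketch uses hypothetical link speeds and ignores everything except bandwidth (no latency, no restore-software overhead), with an optional `efficiency` factor, also an assumption, to model protocol losses.

```python
PB = 1e15  # decimal petabyte, in bytes


def transfer_days(data_bytes: float, link_gbit_per_s: float,
                  efficiency: float = 1.0) -> float:
    """Days to move data over a link running at `efficiency` of line rate."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    bits = data_bytes * 8
    seconds = bits / (link_gbit_per_s * 1e9 * efficiency)
    return seconds / 86_400


# Hypothetical link speeds, from a modest WAN link to a fast replication link.
for gbit in (1, 10, 100):
    print(f"2 PB over {gbit} Gbit/s: {transfer_days(2 * PB, gbit):.1f} days")
```

At 1 Gbit/s, 2 PB takes roughly 185 days, about six months, and even at 100 Gbit/s it takes almost two days; that is the gap an already-replicated copy is meant to close.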

Sponsored by Dell.