
Microsoft details 'planet-scale' AI infrastructure packing 100,000-plus GPUs

'Singularity' uses novel scheduling to wring more work out of infrastructure

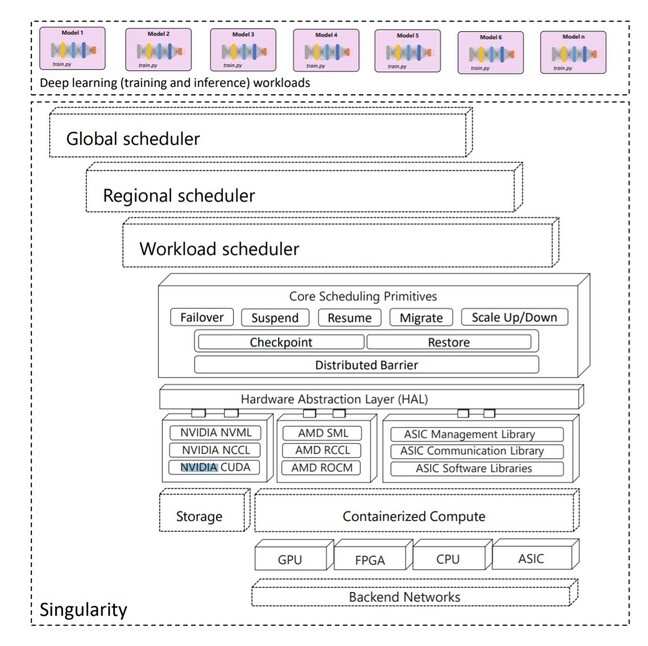

Microsoft has revealed it operates a planet-scale distributed scheduling service for AI workloads that it has modestly dubbed "Singularity".

Singularity is described in a pre-press paper co-authored by 26 Microsoft employees, which says its aim is to help the software giant control costs by driving high utilization for deep learning workloads.

Singularity achieves that goal with what the paper describes as a "novel workload-aware scheduler that can transparently preempt and elastically scale deep learning workloads to drive high utilization without impacting their correctness or performance, across a global fleet of AI accelerators (e.g., GPUs, FPGAs)."

The paper spends more time on the scheduler than on Singularity's overall design, but it does include some figures depicting the system's architecture. An analysis of Singularity's performance mentions a test run on Nvidia DGX-2 servers with two-socket Xeon Platinum 8168 CPUs (20 cores per socket), eight V100 GPUs per server, 692GB of RAM, and InfiniBand networking. With hundreds of thousands of GPUs in the Singularity fleet, plus FPGAs and possibly other accelerators, Microsoft must run at least tens of thousands of servers like these if the fleet is built from similar eight-GPU machines!
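The "tens of thousands of servers" estimate is simple division. The sketch below assumes, for illustration only, that every GPU sits in an eight-GPU server like the test machines and takes 200,000 as the lower bound of "hundreds of thousands"; neither figure is a count from the paper.

```python
# Illustrative assumptions, not figures from the paper:
# every GPU lives in an eight-GPU server, and the fleet has at least 200,000 GPUs.
GPUS_PER_SERVER = 8
MIN_FLEET_GPUS = 200_000


def min_servers(gpus: int, gpus_per_server: int = GPUS_PER_SERVER) -> int:
    """Smallest number of servers able to host `gpus` GPUs."""
    return -(-gpus // gpus_per_server)  # ceiling division on integers


print(min_servers(MIN_FLEET_GPUS))  # 25000
```

Even at that lower bound the result is 25,000 servers, which is where "tens of thousands" comes from.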

The paper focuses on Singularity's scaling tech and schedulers, which it asserts are its secret sauce because they reduce cost and increase reliability.

The software automatically decouples jobs from accelerator resources, which means when jobs scale up or down "we simply change the number of devices the workers are mapped to: this is completely transparent to the user, as the world-size (i.e. total number of workers) of the job remains the same regardless of the number of physical devices running the job."
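Keeping the world size fixed while the device count changes comes down to a mapping from logical worker ranks to physical devices. The sketch below uses round-robin placement as one plausible policy. The function name `map_workers` and the rule that a job may not use more devices than it has workers are assumptions for illustration, not details from the paper.

```python
from typing import Dict, List


def map_workers(world_size: int, num_devices: int) -> Dict[int, List[int]]:
    """Assign logical worker ranks to physical devices round-robin.

    world_size never changes; scaling a job only changes num_devices,
    so a device may host several ranks that must then be time-sliced.
    """
    # Hypothetical constraint: at least one device, and no idle devices.
    if num_devices < 1 or num_devices > world_size:
        raise ValueError("num_devices must be between 1 and world_size")
    mapping: Dict[int, List[int]] = {d: [] for d in range(num_devices)}
    for rank in range(world_size):
        mapping[rank % num_devices].append(rank)
    return mapping


# An eight-worker job squeezed onto two devices: still eight ranks.
print(map_workers(8, 2))  # {0: [0, 2, 4, 6], 1: [1, 3, 5, 7]}
```

From the job's point of view nothing changes: it still sees ranks 0 to 7, which is the transparency the quote describes.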

That's possible thanks to "a novel technique called replica splicing that makes it possible to time-slice multiple workers on the same device with negligible overhead, while enabling each worker to use the entire device memory."
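Time-slicing several workers on one device, with each getting the whole device memory during its turn, can be modeled as round-robin scheduling with a swap whenever the resident worker changes. This is a deliberately naive sketch: the `Device` and `Worker` classes and the 32GB device size are hypothetical. It swaps a worker's full state on every switch. The paper's claim of negligible overhead implies Singularity avoids much of that cost, which this sketch does not attempt to model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Device:
    memory_gb: float
    resident: Optional[str] = None  # worker whose state currently fills device memory
    swaps: int = 0


@dataclass
class Worker:
    name: str
    memory_gb: float
    steps_done: int = 0


def run_time_sliced(device: Device, workers: List[Worker], rounds: int) -> List[str]:
    """Give each worker the entire device for one step per round, in turn."""
    trace: List[str] = []
    for _ in range(rounds):
        for w in workers:
            # Each worker may use all of device memory, but no more.
            if w.memory_gb > device.memory_gb:
                raise MemoryError(f"{w.name} needs more than the whole device")
            if device.resident != w.name:
                device.swaps += 1  # swap the previous worker's state out, this one's in
                device.resident = w.name
            w.steps_done += 1  # one training step during this time slice
            trace.append(w.name)
    return trace


# Two workers that each need almost all of a (hypothetical) 32GB device.
gpu = Device(memory_gb=32)
pair = [Worker("w0", 30), Worker("w1", 30)]
print(run_time_sliced(gpu, pair, rounds=2))  # ['w0', 'w1', 'w0', 'w1']
print(gpu.swaps)  # 4
```

Neither worker could share the device with the other if both had to stay resident at once; time-slicing lets each one see the full memory.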


Making that happen requires what the authors term a "device proxy" that "runs in its own address space and has a one-to-one correspondence to a physical accelerator device. When a job worker initiates device APIs, they are intercepted and sent over the shared memory to the device proxy process that runs in a separate address space, and whose lifetime is decoupled from the lifetime of the worker process."
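The proxy arrangement can be illustrated with a single-process simulation. A stub intercepts the worker's device calls, serializes them, and hands them to a proxy object that owns the device state. Here `pickle` stands in for the shared-memory channel, and an ordinary object stands in for the separate proxy process. The operations `alloc`, `write` and `read` are hypothetical and are not real GPU API names.

```python
import pickle
from typing import Any, Dict, List


class DeviceProxy:
    """Stand-in for the per-device proxy: one instance per physical device.

    It owns the device state, so that state outlives any single worker.
    """

    def __init__(self, device_id: int) -> None:
        self.device_id = device_id
        self.buffers: Dict[str, List[float]] = {}

    def handle(self, message: bytes) -> bytes:
        # Decode a forwarded call, run it against device state, send back a reply.
        op, args = pickle.loads(message)
        result: Any = None
        if op == "alloc":
            name, size = args
            self.buffers[name] = [0.0] * size
        elif op == "write":
            name, values = args
            self.buffers[name][: len(values)] = values
        elif op == "read":
            (name,) = args
            result = list(self.buffers[name])
        else:
            raise ValueError(f"unknown device call: {op}")
        return pickle.dumps(result)


class InterceptedDeviceAPI:
    """What the worker calls instead of the device API: every call is forwarded."""

    def __init__(self, proxy: DeviceProxy) -> None:
        self._proxy = proxy

    def _call(self, op: str, *args: Any) -> Any:
        # Serialize the call as if crossing into another address space.
        return pickle.loads(self._proxy.handle(pickle.dumps((op, args))))

    def alloc(self, name: str, size: int) -> None:
        self._call("alloc", name, size)

    def write(self, name: str, values: List[float]) -> None:
        self._call("write", name, values)

    def read(self, name: str) -> List[float]:
        return self._call("read", name)


proxy = DeviceProxy(device_id=0)
worker_api = InterceptedDeviceAPI(proxy)
worker_api.alloc("weights", 3)
worker_api.write("weights", [1.0, 2.0, 3.0])
del worker_api  # the worker exits or is preempted; the proxy lives on
restarted = InterceptedDeviceAPI(proxy)
print(restarted.read("weights"))  # [1.0, 2.0, 3.0]
```

Because the state lives with the proxy rather than the worker, a worker can be torn down and replaced without the device contents disappearing. This is the decoupled lifetime the paper highlights.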

Together, these techniques let Singularity schedule more jobs more efficiently, so the fleet's servers spend more of their time doing useful work. They also allow jobs to scale up or down quickly without disruption.

"Singularity achieves a significant breakthrough in scheduling deep learning workloads, converting niche features such as elasticity into mainstream, always-on features that the scheduler can rely on for implementing stringent SLAs," the paper concludes.

Sadly, the paper makes no mention of Microsoft sharing its research or techniques openly, but it does shine a light into the company's AI operations. ®