If you want to connect GPUs direct to SSDs for a speed boost, this could be it

Go away, CPU, you're not needed here ... mostly

Nvidia, IBM, and university collaborators have developed an architecture they say will provide fast fine-grain access to large amounts of data storage for GPU-accelerated applications, such as analytics and machine-learning training.

Dubbed Big accelerator Memory, aka BaM, this is an interesting attempt to reduce the reliance of Nvidia graphics processors and similar hardware accelerators on general-purpose chips when it comes to accessing storage, which could improve capacity and performance.

"The goal of BaM is to extend GPU memory capacity and enhance the effective storage access bandwidth while providing high-level abstractions for the GPU threads to easily make on-demand, fine-grain access to massive data structures in the extended memory hierarchy," reads a paper written by the team describing their design.

BaM is a step by Nvidia to move conventional CPU-centric tasks to GPU cores. Rather than relying on things like virtual address translation, page-fault-based on-demand loading of data, and other traditional CPU-centric mechanisms for handling large amounts of information, BaM instead provides software and a hardware architecture that allows Nvidia GPUs to fetch data direct from memory and storage and process it without needing a CPU core to orchestrate it.

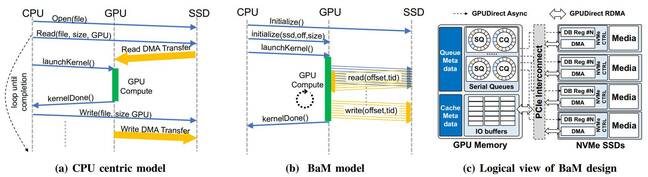

BaM has two main parts: a software-managed cache of GPU memory; and a software library for GPU threads to request data straight from NVMe SSDs by talking directly to the drives. The job of moving information between storage and GPU is handled by the threads on the GPU cores, using RDMA, PCIe interfaces, and a custom Linux kernel driver that allows SSDs to read and write GPU memory directly when needed. Commands for the drives are queued up by the GPU threads if the requested data isn't in the software-managed cache.
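
To make the cache-then-drive flow concrete, here is a minimal host-side Python simulation of the idea, not BaM's actual code. The names `FakeSSD`, `SoftwareCache`, and `BLOCK_SIZE`, the 4 KiB line size, and the least-recently-used eviction policy are all assumptions made for illustration; the article does not say how BaM sizes or evicts cache lines.

```python
from collections import OrderedDict

BLOCK_SIZE = 4096  # hypothetical cache-line size in bytes (an assumption)


class FakeSSD:
    """Stand-in for an NVMe drive: a byte string addressed by block number."""

    def __init__(self, data: bytes):
        self.data = data
        self.reads = 0  # how many block reads actually reached the "drive"

    def read_block(self, block: int) -> bytes:
        self.reads += 1
        start = block * BLOCK_SIZE
        return self.data[start:start + BLOCK_SIZE]


class SoftwareCache:
    """Cache of drive blocks managed in software (LRU chosen for simplicity)."""

    def __init__(self, ssd: FakeSSD, capacity_blocks: int):
        self.ssd = ssd
        self.capacity = capacity_blocks
        self.lines = OrderedDict()  # block number -> cached bytes

    def read(self, offset: int, length: int) -> bytes:
        """Return `length` bytes at `offset`; the range must stay in one block."""
        block, within = divmod(offset, BLOCK_SIZE)
        assert within + length <= BLOCK_SIZE, "range crosses a block boundary"
        if block in self.lines:
            self.lines.move_to_end(block)  # hit: mark as most recently used
        else:
            if len(self.lines) >= self.capacity:
                self.lines.popitem(last=False)  # evict least recently used
            # Miss: only now is a command issued to the drive.
            self.lines[block] = self.ssd.read_block(block)
        return self.lines[block][within:within + length]
```

Two small reads that land in the same block, such as `cache.read(0, 4)` followed by `cache.read(100, 2)`, cost a single drive read in this sketch; the second is served from the cache.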

This means algorithms running on the GPU to perform intensive workloads can get at the information they need quickly and – crucially – in a way that's optimized for their data access patterns. See the aforementioned paper for the full technical details.

Diagrams from the paper comparing the traditional CPU-centric approach to accessing storage (a) to the GPU-led BaM approach (b) and how said GPU would be physically wired to the storage devices (c). Source: Qureshi et al.

The researchers tested a prototype Linux-powered BaM system using off-the-shelf GPUs and NVMe SSDs to demonstrate it is a viable alternative to today's approach of having the host processor direct everything. Storage access can be parallelized, synchronization hurdles are removed, and I/O bandwidth is more efficiently used to boost application performance, we're told.

“A CPU-centric strategy causes excessive CPU-GPU synchronization overhead and/or I/O traffic amplification, diminishing the effective storage bandwidth for emerging applications with fine-grain data-dependent access patterns like graph and data analytics, recommender systems, and graph neural networks,” the researchers stated in their paper this month.
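
I/O traffic amplification is easy to put a number on: divide the bytes moved by the bytes the application actually wanted. The short Python sketch below does just that. The function name and the example sizes (an 8-byte lookup served by a 4 KiB or a 512-byte transfer) are hypothetical figures chosen for illustration, not measurements from the paper.

```python
def io_amplification(useful_bytes: int, transfer_bytes: int) -> float:
    """Ratio of bytes moved over the bus to bytes the application needed."""
    if useful_bytes <= 0:
        raise ValueError("useful_bytes must be positive")
    return transfer_bytes / useful_bytes


# Hypothetical case: a graph workload wants one 8-byte value.
print(io_amplification(8, 4096))  # coarse 4 KiB transfer -> 512.0
print(io_amplification(8, 512))   # finer 512-byte transfer -> 64.0
```

The smaller the transfer unit relative to what each access needs, the less bandwidth is spent on data nobody asked for.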

“With the software cache, BaM does not rely on virtual memory address translation and thus does not suffer from serialization events like TLB misses,” the authors, including Nvidia's chief scientist Bill Dally, who previously led Stanford's computer science department, noted.

"BaM provides a user-level library of highly concurrent NVMe submission/completion queues in GPU memory that enables GPU threads whose on-demand accesses miss from the software cache to make storage accesses in a high-throughput manner," they continued. "This user-level approach incurs little software overhead for each storage access and supports a high-degree of thread-level parallelism."
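
The submission/completion queue pattern the authors describe can be sketched in a few lines of Python. This is a toy model under stated assumptions: `QueuePair` and its methods are hypothetical names, host threads stand in for GPU threads, and a `threading.Lock` stands in for whatever synchronization BaM really uses in GPU memory.

```python
import threading
from collections import deque


class QueuePair:
    """Toy NVMe-style queue pair: bounded submission queue plus completion queue."""

    def __init__(self, depth: int):
        self.depth = depth
        self.sq = deque()  # pending commands: (command_id, block)
        self.cq = deque()  # finished commands: (command_id, data)
        self.lock = threading.Lock()  # stand-in for GPU-side synchronization
        self.next_id = 0

    def submit(self, block: int):
        """Enqueue a read; return its command id, or None if the queue is full."""
        with self.lock:
            if len(self.sq) >= self.depth:
                return None
            cid = self.next_id
            self.next_id += 1
            self.sq.append((cid, block))
            return cid

    def device_process(self, read_block):
        """Play the drive's role: drain submissions and post completions."""
        with self.lock:
            while self.sq:
                cid, block = self.sq.popleft()
                self.cq.append((cid, read_block(block)))

    def poll(self):
        """Return one completion as (command_id, data), or None if none is ready."""
        with self.lock:
            return self.cq.popleft() if self.cq else None
```

Many threads can call `submit` at once and each gets a distinct command id, which it later matches against the entries returned by `poll`. A full queue returns `None` rather than blocking, leaving the retry policy to the caller.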

The team plans to open-source details of their hardware and software optimization for others to build such systems. We're reminded of AMD's Radeon Solid State Graphics (SSG) card that placed flash next to a GPU. ®