AMD: Our latest, pricier mega-cache Epyc processors leapfrog Intel’s

Refreshed third-gen 'Milan-X' sports 3D vertical L3 memory

AMD has announced its latest, pricier Epyc server processors, code-named Milan-X, to extend the chip giant's lead over Intel for technical computing applications. The key to their appeal is a massive amount of cache fused onto the chips, a major jump for HPC and other demanding workloads.

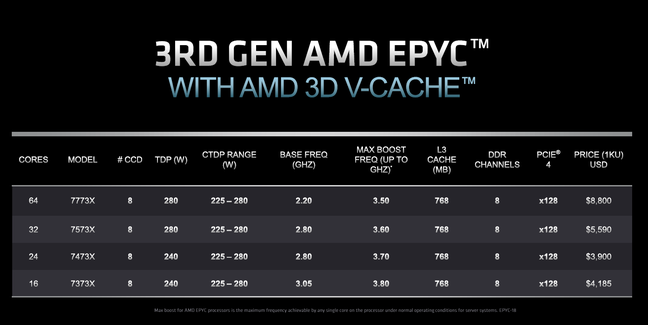

The four new microprocessors range from 16 to 64 CPU cores, and represent a refresh of AMD's third-generation Epyc chips that debuted last year. Crucially, they bring the cache: an unprecedented 768MB of L3, triple the 256MB found in 2021's comparable devices.

The company claimed this extra cache allows the new Epyc chips to perform anywhere from 23-88 percent faster than Intel's latest Xeon processors for servers running technical computing apps from major vendors Ansys and Altair. AMD said it can also significantly reduce the number of servers and electricity needed to perform the same amount of work.

But in exchange, the bulk pricing for these chips comes at a "modest premium" over Epyc processors with similar attributes, with the additional cost ranging from $664 to $1,000. We suspect these premiums may be passed on by server makers, such as Dell, Hewlett Packard Enterprise, Lenovo, Supermicro and others that are offering Milan-X chips at launch. Microsoft Azure is also offering the new chips, which will replace last year's Epyc CPUs for its Azure HBv3 virtual machines.

The transition should be rather seamless, however, since the new chips are compatible with AMD's existing platform for third-gen Epyc processors. That means there should be no problems in running software on the new chips, and server makers and cloud providers will only have to update the BIOS for existing server builds.

Ram Peddibhotla, AMD's corporate vice president of Epyc product management, told The Register the performance and cost savings benefits of the Milan-X chips greatly outweigh their higher price.

"There is a tremendous value that's driven by the size of the cache that, in turn, drives the size of the performance gains. So I think that modest premium is more than paid off by that acceleration in performance," he said.

The monster cache comes courtesy of AMD's 3D V-Cache technology, which is an early use-case of the company's new 3D die stacking technology. It's why the new lineup carries the somewhat awkward name of "third-gen AMD Epyc processors with AMD 3D V-Cache technology."

The technology, which is also found in AMD's forthcoming Ryzen 7 5800X3D CPU for PC gaming, stacks an extra 64MB of SRAM on top of every group of cores on the processor, known as core complex dies, bringing each one's L3 cache to 96MB. That 96MB is triple the 32MB of L3 cache found in each core complex of the original third-gen Epyc processors released last year. And with the four new Milan-X processors each sporting eight core complexes, the total L3 cache comes to 768MB per CPU.
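The cache arithmetic above is easy to check. The short Python sketch below assumes the commonly cited split of 32MB of on-die L3 plus 64MB of stacked SRAM per core complex die, and eight core complex dies per package for both the original third-gen Epyc chips and Milan-X. The constant and function names are our own illustration, not anything AMD publishes.

```python
# Per-core-complex L3 figures for third-gen Epyc, in MB (assumed split, see above).
BASE_L3_PER_CCD_MB = 32      # L3 on each original third-gen Epyc core complex die
STACKED_L3_PER_CCD_MB = 64   # extra SRAM stacked on each die by 3D V-Cache
CCDS_PER_SOCKET = 8          # each of the four Milan-X parts has eight core complexes


def l3_per_socket_mb(ccds: int, l3_per_ccd_mb: int) -> int:
    """Total L3 cache on one processor package, in MB."""
    return ccds * l3_per_ccd_mb


milan_x_per_ccd = BASE_L3_PER_CCD_MB + STACKED_L3_PER_CCD_MB   # 96MB per die
milan = l3_per_socket_mb(CCDS_PER_SOCKET, BASE_L3_PER_CCD_MB)    # original chips
milan_x = l3_per_socket_mb(CCDS_PER_SOCKET, milan_x_per_ccd)     # Milan-X
two_socket = 2 * milan_x                                          # dual-socket server

print(f"Original L3 per socket:   {milan} MB")
print(f"Milan-X L3 per socket:    {milan_x} MB ({milan_x // milan}x)")
# 1,536MB is 1.5GB in the binary units cache sizes are usually quoted in.
print(f"Two-socket Milan-X total: {two_socket} MB = {two_socket / 1024} GB")
```

The last line is the same arithmetic behind the "more than 1.5GB" two-socket figure.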

What Peddibhotla said is especially neat is the flexibility in how the 96MB of L3 cache can be shared among the cores on each core complex. For the new 64-core Epyc 7773X, if an application uses all eight cores on each core complex, each core effectively gets 12MB of cache. But if an application needs only one core in a complex, that core can use all 96MB on the complex. This is also why the lower-core-count models naturally have a higher cache-per-core ratio.
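To see why the lower-core-count parts end up with more cache per core, here is a rough Python sketch. It assumes each model's cores are split evenly across its eight core complexes, and it treats "cache per core" as an even share of a complex's 96MB. The real L3 is shared dynamically, so this is an average rather than a hard partition. The model-to-core-count mapping uses only the figures given in this article.

```python
L3_PER_CCD_MB = 96  # L3 per Milan-X core complex
CCDS = 8            # core complexes per Milan-X package

# Core counts of the four Milan-X models named in the article.
MODELS = {"7373X": 16, "7473X": 24, "7573X": 32, "7773X": 64}


def cache_per_active_core_mb(active_cores_per_ccd: int) -> float:
    """Average L3 share per core when this many cores on one complex are busy.

    Assumption: an even split of the shared cache, not a fixed allocation.
    """
    if not 1 <= active_cores_per_ccd <= 8:
        raise ValueError("a Zen 3 core complex has between 1 and 8 cores")
    return L3_PER_CCD_MB / active_cores_per_ccd


for name, cores in MODELS.items():
    per_ccd = cores // CCDS  # assumes cores are spread evenly over the 8 complexes
    share = cache_per_active_core_mb(per_ccd)
    print(f"Epyc {name}: {per_ccd} cores per complex -> {share:g} MB per core")

# A lightly threaded job with one busy core per complex sees the whole 96MB.
print(f"One active core per complex: {cache_per_active_core_mb(1):g} MB")
```

Under these assumptions the 16-core 7373X works out to 48MB per core, four times the 7773X's 12MB.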

"That really goes a long way in lightly threaded applications," he said.

The L3 cache is the only big difference between the four new processors and comparable "F series" models from last year that have higher frequencies. They come with the same Zen 3 architecture on the same 7nm manufacturing process, with support for PCIe Gen4 connectivity and up to eight channels of DDR4-3200 memory. They also have the same thermal envelopes and silicon-based security features like Secure Memory Encryption.

But Peddibhotla emphasized that the massive L3 cache has big benefits for technical computing, allowing two-socket servers to sport more than 1.5GB in total L3 cache. We'll be honest: that last fact actually made Peddibhotla a little giddy.

"Every time I say that, I look back and say, holy cow, I don't think I ever expected to say 'gigabyte' and 'cache' in the same sentence," he said.

Peddibhotla wanted to make clear that the extra cache won't have an impact on applications that aren't cache-sensitive, of which there are many in the data center market.

But crucially, he said, the technical computing apps AMD is targeting with the new Milan-X chips are essential to many businesses, from auto manufacturers to even chip houses like AMD. Other industries that use this kind of software include chemical engineering, finance, energy and the life sciences.

"These are the most complex and demanding workloads in the data center. And these are actually typically the key thrust of the product design that is core of the business in the enterprise. So technical computing workloads are really not support applications. They're not ancillary. They really go to the essence of what the companies actually do," he said.

These applications include electronic design automation, which is used for chip design, and computational fluid dynamics, which is used to design things like planes, trains and automobiles. The company is also going after finite element analysis and structural analysis with the new Milan-X chips.

To give us an idea of how the extra cache can make a difference, AMD provided an example of a server running Synopsys' VCS software that is used for chip design. A server using last year's 16-core Epyc 73F3 was able to perform 24.4 jobs per hour while a server with the new 16-core Epyc 7373X could do 40.6 jobs per hour, resulting in 66 percent faster performance.
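The 66 percent figure follows directly from AMD's two throughput numbers. A one-function Python check (the function name is ours, for illustration only):

```python
def percent_faster(old_rate: float, new_rate: float) -> float:
    """Percentage by which new_rate exceeds old_rate (e.g. jobs per hour)."""
    return (new_rate / old_rate - 1) * 100


# Synopsys VCS throughput figures supplied by AMD, in jobs per hour.
EPYC_73F3_JOBS_PER_HOUR = 24.4
EPYC_7373X_JOBS_PER_HOUR = 40.6

gain = percent_faster(EPYC_73F3_JOBS_PER_HOUR, EPYC_7373X_JOBS_PER_HOUR)
print(f"Epyc 7373X vs 73F3: {gain:.0f}% more jobs per hour")
```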

AMD also provided competitive comparisons with Intel's third-gen Xeon processors that came out last year. Compared to Intel's top-of-the-line, 40-core Xeon 8380, AMD's new 64-core Epyc 7773X provided performance that was up to 44 percent better using Altair Radioss for structural analysis, up to 47 percent better using Ansys Fluent for fluid dynamics, up to 69 percent better using Ansys LS-DYNA for finite element analysis and up to 96 percent better using Ansys CFX for fluid dynamics.

Applications for structural analysis, fluid dynamics and finite element analysis can perform better with more cores, which is one reason, besides the bigger cache, that AMD's new 64-core CPU posted such a large performance lead, according to Peddibhotla.

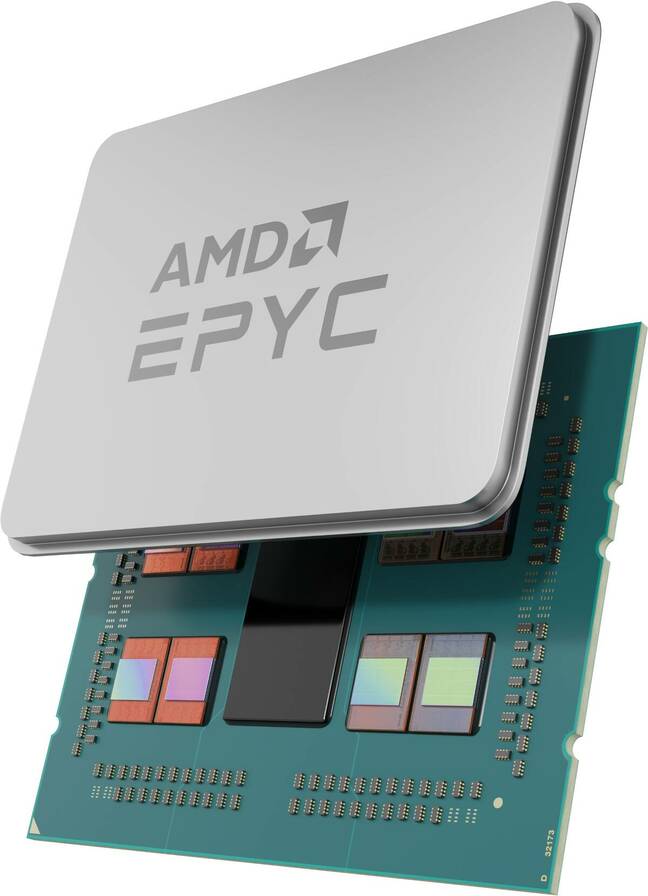

AMD's rendering of its latest Epyc processor. The central silicon die in the package is the IO controller, and the dies around it are the CPU core complexes

The company showed that its processors could compete core-for-core against Intel. Compared to Intel's 32-core Xeon 8352, AMD's new 32-core Epyc 7573X could perform up to 23 percent better using Ansys Fluent, up to 37 percent better using Altair Radioss, up to 47 percent better using Ansys LS-DYNA and up to 88 percent better using Ansys CFX.

Some applications, like electronic design automation and certain fluid dynamics software, depend more on sheer per-core horsepower, measured in gigahertz, than on core count, which is why the company is also releasing the 16-core Epyc 7373X and the 24-core Epyc 7473X. The company did not provide competitive performance comparisons for these models, however.

Peddibhotla said the performance advantages of AMD's new cache-rich chips can translate into "incredible savings and acquisition cost."

In an example drawn up by AMD, the company said 10 servers full of the new 32-core Epyc 7573X could perform the same amount of work as 20 servers full of Intel's 32-core Xeon 8362. That means AMD can essentially cut the number of servers and the electricity required roughly in half, according to the company, which can also lower the total of upfront and ongoing costs by 51 percent over three years.
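AMD's consolidation claim boils down to a per-server throughput ratio. The Python sketch below assumes, purely for illustration, that one Epyc 7573X server does twice the work of one Xeon 8362 server (the ratio implied by "10 servers versus 20") and rounds up to whole servers. The throughput units and the second workload size are hypothetical. The 51 percent cost figure comes from AMD's own cost model and isn't reproduced here.

```python
import math


def servers_needed(workload: float, throughput_per_server: float) -> int:
    """Whole servers needed to finish `workload` units of work in a fixed time window."""
    if throughput_per_server <= 0:
        raise ValueError("throughput must be positive")
    return math.ceil(workload / throughput_per_server)


# Hypothetical normalization: one Xeon 8362 server = 1.0 unit of work,
# one Epyc 7573X server = 2.0 units (the ratio implied by AMD's example).
XEON_8362_THROUGHPUT = 1.0
EPYC_7573X_THROUGHPUT = 2.0

for workload in (20, 35):  # 20 matches AMD's example; 35 is an arbitrary extra case
    intel = servers_needed(workload, XEON_8362_THROUGHPUT)
    amd = servers_needed(workload, EPYC_7573X_THROUGHPUT)
    saving = 1 - amd / intel
    print(f"workload {workload}: {intel} Xeon servers vs {amd} Epyc servers "
          f"({saving:.0%} fewer)")
```

Because servers come in whole units, a workload that doesn't divide evenly leaves the saving slightly under half, as the second case shows.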

Peddibhotla pitched this as a way for companies to sharpen their competitive edge, save money and even meet the corporate sustainability goals that are in vogue.

"A company that adopts Milan-X can, of course, just reap those cost savings, but they can also plow those right back into their business to give their designers a lot more performance and an ability to run many more design cycles and that, in turn, will lead to better quality products and faster time to market," he said.

"That's the key for us: When you provide this type of cost savings and this type of design acceleration, that translates directly to the competitive advantage for these companies." ®

Rent now, maybe buy later?

Microsoft Azure is the first among the major clouds to announce it is offering AMD's Milan-X processors as a service, Brandon Vigliarolo writes.

The Windows giant said on Monday the silicon is generally available in its HBv3 virtual machines.

"Microsoft and AMD share a vision of a new era of high-performance computing," said Microsoft Azure CTO Mark Russinovich. "One defined by rapid improvement to Azure's HPC and AI platforms."

He also mentioned a number of use cases for the 3D V-Cache-enabled HBv3 machines, from fluid dynamics and weather simulation to AI inference, training and energy research.

Azure subscribers can spin up Milan-X chips in the cloud today, provided they're in the East US, South Central US, or West Europe regions. Those in UK South, Southeast Asia, China North 3, and Central India will have to wait until Q2 2022. If you're not in one of those regions, sorry: you probably won't be getting this unless Microsoft extends it further.