
Google says it would release its photorealistic DALL·E 2 rival – but this AI is too prejudiced for you to use

It has this weird habit of drawing stereotyped White people, team admit

DALL·E 2 may have to cede its throne as the most impressive image-generating AI to Google, which has revealed its own text-to-image model called Imagen.

Like OpenAI's DALL·E 2, Google's system outputs images of stuff based on written prompts from users. Ask it for a vulture flying off with a laptop in its claws and you'll perhaps get just that, all generated on the fly.

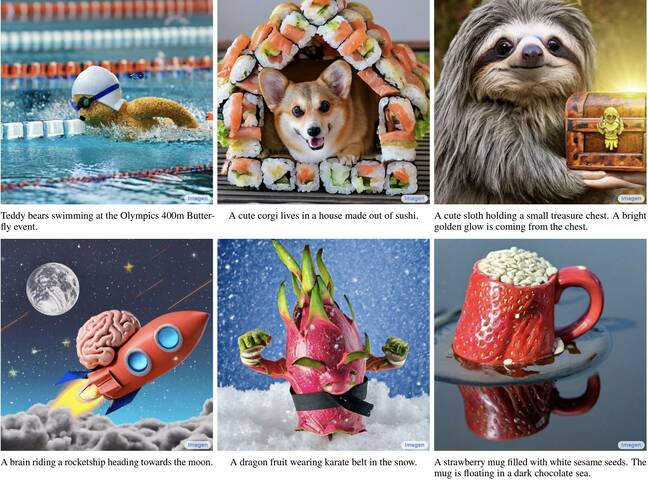

A quick glance at Imagen's website shows off some of the pictures it's created (and Google has carefully curated), such as a blue jay perched on a pile of macarons, a robot couple enjoying wine in front of the Eiffel Tower, or Imagen's own name sprouting from a book. According to the team, "human raters exceedingly prefer Imagen over all other models in both image-text alignment and image fidelity," but they would say that, wouldn't they.

Imagen comes from Google Research's Brain Team, who claim the AI achieved an unprecedented level of photorealism thanks to a combination of transformer and image diffusion models. When tested against similar models, such as DALL·E 2 and VQ-GAN+CLIP, the team said Imagen blew the lot out of the water. DrawBench, a list of 200 prompts used to benchmark the models, was built in-house.

Imagen's work, with prompts ... Source: Google

Imagen's designers say that their key breakthrough was in the training stage of their model. Their work, the team said, shows how effective large, frozen pre-trained language models can be as text encoders. Scaling that language model, they found, had far more impact on performance than scaling Imagen's other components.

"Our observation … encourages future research directions on exploring even bigger language models as text encoders," the team wrote.

Unfortunately for those hoping to take a crack at Imagen, the team that created it said it is releasing neither its code nor a public demo, for several reasons.

For example, Imagen isn't good at generating human faces. In experiments with pictures including human faces, Imagen received only a 39.2 percent preference from human raters over reference images. When pictures with human faces were excluded, that number rose to 43.9 percent.
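To make those percentages concrete, here is a minimal sketch of how a preference rate can be tallied, assuming each rater simply picks either the generated image or the reference image in a side-by-side comparison. The function name and the vote data are hypothetical illustrations, not Google's evaluation code or results.

```python
from collections import Counter


def preference_rate(votes, model="model"):
    """Return the percentage of votes that picked `model`.

    `votes` holds one label per side-by-side comparison: the label of
    whichever image the rater preferred (hypothetical format).
    """
    if not votes:
        raise ValueError("need at least one vote")
    counts = Counter(votes)
    return 100.0 * counts[model] / len(votes)


# Made-up votes for illustration only, not data from the Imagen team.
votes = ["model"] * 2 + ["reference"] * 3
print(f"{preference_rate(votes):.1f}%")  # prints "40.0%"
```

Under that two-way assumption, a rate of 50 percent would mean raters picked the generated image as often as the reference, so both figures quoted above sit below that mark.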

Unfortunately, Google didn't provide any Imagen-generated human pictures, so it's impossible to tell how they compare to those generated by platforms like This Person Does Not Exist, which uses a generative adversarial network to generate faces.

Aside from technical concerns, and more importantly, Imagen's creators found that it's a bit racist and sexist even though they tried to prevent such biases.

Imagen showed "an overall bias towards generating images of people with lighter skin tones and … portraying different professions to align with Western gender stereotypes," the team wrote. Eliminating humans didn't help much, either: "Imagen encodes a range of social and cultural biases when generating images of activities, events and objects."

Like similar AIs, Imagen was trained on image-text pairs scraped from the internet into publicly available datasets like COCO and LAION-400M. The Imagen team said it filtered a subset of the data to remove noise and offensive content, though an audit of the LAION dataset "uncovered a wide range of inappropriate content including pornographic imagery, racist slurs, and harmful social stereotypes."

Bias in machine learning is a well-known issue: Twitter's image cropping and Google's computer vision are just a couple of the systems that have been singled out for playing into stereotypes that are coded into the data we produce.

"There are a multitude of data challenges that must be addressed before text-to-image models like Imagen can be safely integrated into user-facing applications … We strongly caution against the use of text-to-image generation methods for any user-facing tools without close care and attention to the contents of the training dataset," Imagen's creators said. ®