
Thanks to generative AI, catching fraud science is going to be this much harder

Why do experiments and all that work when a model could just invent convincing data for you?

Generative AI poses interesting challenges for academic publishers tackling fraud in science papers as the technology shows the potential to fool human peer review.

Describe an image for DALL-E, Stable Diffusion, and Midjourney, and they'll generate one in seconds. These text-to-picture systems have rapidly improved over the past few years, and what initially began as a research prototype, producing benign and wonderfully bizarre illustrations of baby daikon radishes walking dogs in 2021, has since morphed into commercial software, built by billion-dollar companies, capable of generating increasingly realistic images.

These AI models can produce lifelike pictures of human faces, objects, and scenes, and it's only a matter of time before they get good at creating convincing scientific images and data, too. Text-to-image models are now widely accessible and pretty cheap to use, and they could help dodgy scientists forge results and publish sham research more easily.

Image manipulation is already a top concern for academic publishers, as it's the most common form of scientific misconduct of late. Authors can use all sorts of tricks, such as flipping, rotating, or cropping parts of the same image, to fake findings. Editors can be fooled into believing the results are real and go on to publish the work.
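To make the flip, rotate, and crop tricks concrete, here is a toy Python sketch of how a checker might test whether one figure panel is a reused piece of another. It assumes both panels are already grayscale grids of whole-number pixels and that the copied pixels are exact; the function names are hypothetical, and real screening tools rely on tolerant feature matching rather than strict pixel equality.

```python
from typing import Iterator, List, Optional, Tuple

Image = List[List[int]]  # grayscale pixel grid: a list of equal-length rows


def rotate90(img: Image) -> Image:
    """Rotate an image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def flip_horizontal(img: Image) -> Image:
    """Mirror an image left to right."""
    return [row[::-1] for row in img]


def orientations(img: Image) -> Iterator[Tuple[str, Image]]:
    """Yield all eight flip/rotation variants of an image, each with a label."""
    for flipped in (False, True):
        candidate = flip_horizontal(img) if flipped else img
        for turns in range(4):
            label = ("flip+" if flipped else "") + f"rot{90 * turns}"
            yield label, candidate
            candidate = rotate90(candidate)


def find_patch(image: Image, patch: Image) -> Optional[Tuple[int, int]]:
    """Return the (row, col) where `patch` appears exactly inside `image`, else None."""
    ph, pw = len(patch), len(patch[0])
    ih, iw = len(image), len(image[0])
    for r in range(ih - ph + 1):
        for c in range(iw - pw + 1):
            if all(image[r + i][c:c + pw] == patch[i] for i in range(ph)):
                return r, c
    return None


def find_reused_region(source: Image, suspect: Image) -> Optional[Tuple[str, Tuple[int, int]]]:
    """Check whether `suspect` is a flipped and/or rotated crop of `source`.

    Returns the orientation that maps `suspect` back onto `source` plus the
    top-left position of the match, or None if no exact copy is found.
    """
    for label, candidate in orientations(suspect):
        if len(candidate) > len(source) or len(candidate[0]) > len(source[0]):
            continue  # this orientation is too big to fit inside the source
        position = find_patch(source, candidate)
        if position is not None:
            return label, position
    return None


if __name__ == "__main__":
    source = [[1, 2, 3, 4],
              [5, 6, 7, 8],
              [9, 10, 11, 12]]
    crop = [row[1:3] for row in source[0:2]]    # [[2, 3], [6, 7]]
    suspect = flip_horizontal(crop)             # [[3, 2], [7, 6]]
    print(find_reused_region(source, suspect))  # ('flip+rot0', (0, 1))
```

Run as a script, the example reports that the suspect panel, once mirrored back, sits at row 0, column 1 of the source. Exhaustive pixel comparison like this is slow and brittle, and it only works when a source exists to match against; a panel generated from scratch gives it nothing to find.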

Various publishers are now turning to AI software in an attempt to detect signs of image manipulation during the review process. In most cases, images have been mistakenly duplicated or arranged by scientists who have muddled up their data, but sometimes the manipulation is blatant fraud.

But just as publishers begin to get a grip on manual image manipulation, another threat is emerging. Some researchers may be tempted to use generative AI models to create brand-new fake data rather than altering existing photos and scans. In fact, there is evidence to suggest that sham scientists may be doing this already.

AI-made images spotted in papers?

In 2019, DARPA launched its Semantic Forensics (SemaFor) program to combat disinformation, funding researchers developing forensic tools capable of detecting AI-made media.

A spokesperson for Uncle Sam's defense research agency confirmed it has spotted fake medical images in published science papers that appear to be generated using AI. Before text-to-image models, generative adversarial networks (GANs) were popular. DARPA realized these models, best known for their ability to create deepfakes, could also forge images of medical scans, photos of cells, or other types of imagery often found in biomedical studies.

"The threat landscape is moving quite rapidly," William Corvey, SemaFor's program manager, told The Register. "The technology is becoming ubiquitous for benign purposes."

Corvey said the agency has had some success developing software capable of detecting GAN-made images, though the tools are still under development.

"We have results that suggest you can detect 'siblings or distant cousins' of the generative mechanism you've learned to detect previously, irrespective of the content of the generated images. SemaFor analytics look at a variety of attributions and details associated with manipulated media, everything from metadata, statistical anomalies, to more visual representations," he said.

Some image analysts scrutinizing data in scientific papers have also come across what look like GAN-generated images. A GAN, or generative adversarial network, is a type of machine-learning system that can conjure up writing, music, pictures, and more.

For instance, Jennifer Byrne, a professor of molecular oncology at the University of Sydney, and Jana Christopher, an image integrity analyst for journal publisher EMBO Press, came across a strange set of images that appeared in 17 biochemistry-related studies.

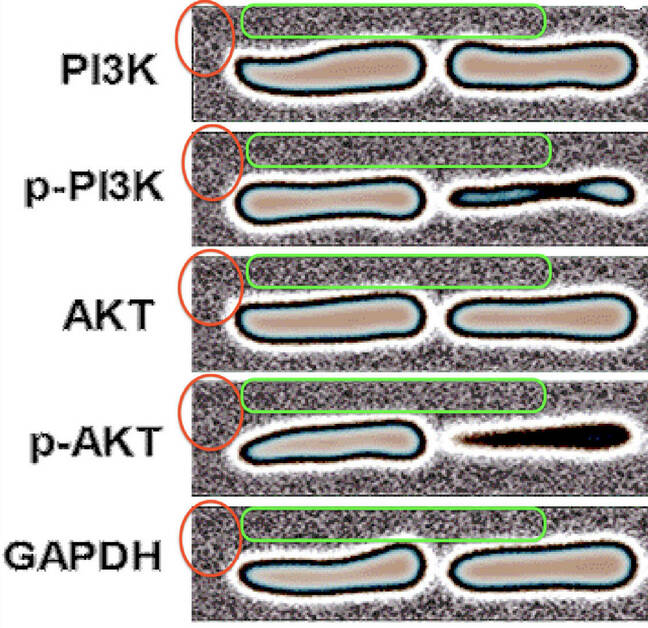

The pictures depicted western blots, series of bands that indicate the presence of specific proteins in a sample, and all of them curiously seemed to have the same background. That's not supposed to happen.

Examples of repeating backgrounds in western blot images, highlighted by the red and green outlines ... Source: Byrne, Christopher 2020

In 2020, Byrne and Christopher concluded that the suspicious-looking images may have been produced as part of a paper mill operation: an effort to mass-produce biochemistry papers using faked data and get them peer reviewed and published. Such a caper might be pulled off to, say, benefit academics who are compensated based on their accepted paper output, or to help a department hit a quota of published reports.

"The blots in the example shown in our paper are most likely computer-generated," Christopher told The Register.

"Screening papers both pre- and post-publication, I often come across fake-looking images, predominantly western blots, but increasingly also microscopy images. I am very aware that many of these are most likely generated using GANs."

Elisabeth Bik, a freelance image sleuth, can often tell when images have been manipulated. She pores over scientific manuscripts hunting for manipulated pictures, and flags these issues for journal editors to examine further. But it's harder to combat fake images when they have been generated wholesale by an algorithm.

She pointed out that although the repeated background in the images highlighted in Byrne and Christopher's study is a telltale sign of potential forgery, the bands themselves are unique. The computer vision software Bik uses to scan papers for image fraud would struggle to flag them because none of the blots is obviously duplicated.

"We'll never find an overlap. They're all, I believe, artificially made. How exactly, I'm not sure," she told The Register.

It's easier to generate fake images with the latest generative AI models

GANs have largely been displaced by diffusion models. These systems produce unique pictures and power today's text-to-image software, including DALL-E, Stable Diffusion, and Midjourney. The software learns to map the visual representation of objects and concepts to natural language, and could significantly lower the barrier for academic cheating.

Scientists can just describe the type of false data they want generated to suit their conclusions, and these tools will produce it for them. At the moment, however, they can't quite create realistic-looking scientific images. The tools sometimes produce clusters of cells that look convincing at first glance, but fail miserably when it comes to western blots.

This is the sort of thing these AI programs can generate:

Here’s what @OpenAI’s DALL-E does with biological cell prompts. Specifically: “cells under a microscope” and “T-cells under a scanning electron microscope” pic.twitter.com/BgcZr3k5Q5 (Tara Basu Trivedi, @tbt94, August 23, 2022)

William Gibson – a physician-scientist and medical oncology fellow, not the famous author – has further examples here, including how today's models struggle with the concept of a western blot.

The technology is only getting better, however, as developers train larger models on more data.

David Bimler, better known as Smut Clyde and another expert at recognizing image manipulation in science papers, told us: "Papermillers will illustrate their products using whatever method is cheapest and fastest, relying on weaknesses in the peer-review process."

"They could simply copy [western blots] from older papers but even that involves work to search through old papers. At the moment, I suspect, using a GAN is still some effort. Though that will change," he added.

DARPA is now looking to expand its SemaFor program to study text-to-image systems. "These kinds of models are fairly new and while in scope, are not part of our current work on SemaFor," Corvey said.

"However, SemaFor evaluators are likely to look at these models during the next evaluation phase of the program beginning Fall 2023."

Meanwhile, the quality of scientific research will erode if academic publishers can't find ways to detect fake AI-generated images in papers. In the best-case scenario, this form of academic fraud will be limited to paper mill schemes that don't receive much attention anyway. In the worst-case scenario, it will reach even the most reputable journals, and well-meaning scientists will waste time and money chasing false ideas they believe to be true. ®