Look mom, no InfiniBand: Nvidia’s DGX GH200 glues 256 superchips with NVLink

Unless you need more nodes

Computex Nvidia unveiled its latest party trick at Computex in Taipei: stitching together 256 Grace-Hopper superchips into an "AI supercomputer" using nothing but NVLink.

The kit, dubbed the DGX GH200, is being offered as a single system tuned for memory-intensive AI models for natural language processing (NLP), recommender systems, and graph neural networks.

In a press briefing ahead of CEO Jensen Huang's keynote, executives compared the GH200 to the biz's recently launched DGX H100 server, claiming up to 500x more memory. However, the two are nothing alike. The DGX H100 is an 8U system with dual Intel Xeons and eight H100 GPUs and about as many NICs. The DGX GH200 is a 24-rack cluster built on an all-Nvidia architecture, so the two are not exactly comparable.

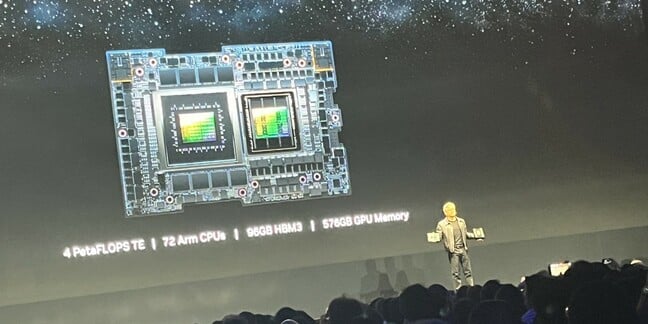

At the heart of this super-system is Nvidia's Grace-Hopper chip. Unveiled at its March GTC event in 2022, the hardware blends a 72-core Arm-compatible Grace CPU cluster and 512GB of LPDDR5X memory with a 96GB GH100 Hopper GPU die using the company's 900GBps NVLink-C2C interface. If you need a refresher on this next-gen compute architecture, check out our sister site The Next Platform for a deeper dive into that silicon.

According to Nvidia VP of Accelerated Computing Ian Buck, the DGX GH200 features 16 compute racks each with 16 nodes equipped with a superchip. In total, the DGX GH200 platform boasts 18,432 cores, 256 GPUs, and a claimed 144TB of "unified" memory.
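For readers who want to check the headline numbers, here is a back-of-the-envelope sketch using only the rack, node, and core counts quoted above. The variable names are our own, and it assumes one superchip per node, as Buck describes.

```python
# Back-of-the-envelope check of the quoted totals.
# All inputs come from the figures in the article; names are illustrative.
COMPUTE_RACKS = 16
NODES_PER_RACK = 16        # assumption: one Grace-Hopper superchip per node
CORES_PER_SUPERCHIP = 72   # Arm-compatible Grace CPU cores

superchips = COMPUTE_RACKS * NODES_PER_RACK
total_cores = superchips * CORES_PER_SUPERCHIP
total_gpus = superchips    # one Hopper GPU die per superchip

print(f"superchips: {superchips}")
print(f"CPU cores:  {total_cores:,}")
print(f"GPUs:       {total_gpus}")
```

The results line up with the 256 GPUs and 18,432 cores Nvidia is quoting.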

At first blush, this is great news for those looking to run very large models which need to be stored in memory. As we've previously reported, LLMs need a lot of memory, but in this case that 144TB figure may be stretching the truth a bit. Only about 20TB of that is the super-speedy HBM3 that's typically used to store model parameters. The other 124TB is DRAM.

In scenarios where a workload can't fit within the GPU's vRAM, it typically ends up spilling over to the much slower DRAM, which is further bottlenecked by the need to copy files over a PCIe interface. This, obviously, isn't great for performance. But it appears Nvidia is getting around this limitation by using a combination of very fast LPDDR5X memory, good for half a terabyte per second of bandwidth, and NVLink rather than PCIe.

Speaking at the COMPUTEX 2023 conference in Taiwan today, Nvidia boss Jensen Huang compared Grace-Hopper to his company's H100 mega-GPU. He conceded that the H100 has more power than Grace-Hopper. But he pointed out that Grace-Hopper has more memory than the H100, so is more efficient and therefore more applicable to many data centers.

"Plug this into your DC and you can scale out AI", he said.

Gluing it all together

On that topic, Nvidia isn't just using NVLink for GPU-to-GPU communications, it's also using it to glue together the system's 256 nodes. According to Nvidia, this will allow very large language models (LLMs) to spread across the system's 256 nodes while avoiding network bottlenecks.

The downside to using NVLink is, at least for now, it can't scale beyond 256 nodes. This means for larger clusters you're still going to be looking at something like InfiniBand or Ethernet — more on that later.

Despite this limitation, Nvidia is still claiming pretty substantial speedups for a variety of workloads including natural language processing, recommender systems, and graph neural networks, compared to a cluster of more conventional DGX H100s using InfiniBand.

In total, Nvidia says a single DGX GH200 cluster is capable of delivering peak performance of about an exaflop. In a pure HPC workload, performance is going to be far less. Nvidia's head of accelerated compute estimates peak performance in a FP64 workload at about 17.15 petaflops when taking advantage of the GPU's tensor cores.

If the company can achieve a reasonable fraction of this in the LINPACK benchmark, that would place a single DGX GH200 cluster in the top 50 fastest supercomputers.

Thermals dictate design

Nvidia didn't address our questions on thermal management or power consumption, but given the cluster's compute density and intended audience, we're almost certainly looking at an air-cooled system.

Even without going to liquid or immersion cooling, something the company is looking into, Nvidia could have made the cluster much more compact.

At Computex last year, the company showed off a 2U HGX reference design with twin Grace-Hopper superchip blades. Using these chassis, Nvidia could have managed to pack all 256 chips into eight racks.

We suspect Nvidia shied away from this due to datacenter power and cooling limitations. Remember, Nvidia's customers still need to deploy the cluster in their datacenters, and if they need to make major infrastructure changes, it's going to be a tough sell.

Nvidia's Grace-Hopper chip alone requires about a kilowatt of power. So without factoring in motherboard and networking consumption, you're looking at cooling about 16 kilowatts per rack, just for the compute. This is already going to be a lot for many datacenter operators used to cooling 6-10 kilowatt racks, but at least within the realm of reason.
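To put rough numbers on that, here is a minimal sketch of the per-rack arithmetic. It assumes a flat 1kW per superchip (the "about a kilowatt" figure above), 16 superchips per compute rack, and ignores motherboard and networking draw. The eight-rack figure is our own extrapolation from the denser 2U design mentioned earlier, not something Nvidia has stated.

```python
# Rough compute-only power per rack; all names are illustrative.
KW_PER_SUPERCHIP = 1.0       # assumption: "about a kilowatt" per Grace-Hopper chip
TOTAL_SUPERCHIPS = 256
TYPICAL_RACK_KW = (6, 10)    # range many datacenter operators are used to cooling


def rack_kw(racks: int) -> float:
    """Compute-only kilowatts per rack if the chips are spread evenly."""
    return TOTAL_SUPERCHIPS / racks * KW_PER_SUPERCHIP


as_shipped = rack_kw(16)     # the 16 compute racks Nvidia is shipping
dense = rack_kw(8)           # hypothetical eight-rack layout using 2U twin blades

print(f"16 racks: {as_shipped:.0f} kW per rack")
print(f" 8 racks: {dense:.0f} kW per rack")
print(f"vs. a 10 kW rack: {as_shipped / TYPICAL_RACK_KW[1]:.1f}x and {dense / TYPICAL_RACK_KW[1]:.1f}x")
```

Under these assumptions the shipping layout lands at 16kW per rack, while the denser eight-rack option would double that to 32kW, which goes some way to explaining why Nvidia spread things out.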

Considering the cluster is being sold as a unit, we suspect the kinds of customers considering the DGX GH200 are also taking thermal management and power consumption into consideration. According to Nvidia, Meta, Microsoft, and Google are already fielding the clusters, with general availability slated for before the end of 2023.

Jensen Huang launching the DGX GH200 on stage at Computex 2023. Yes, we should have brought a better camera

Scaling out with Helios

We mentioned earlier that in order to scale out the DGX GH200 beyond 256 nodes, customers would need to resort to more traditional networking methods, and that's exactly what Nvidia aims to demonstrate with its upcoming Helios "AI supercomputer."

While details are pretty thin at this point, it looks like Helios is essentially just four DGX GH200 clusters glued together using the company's 400Gbps Quantum-2 InfiniBand switches.

While we're talking switches, at COMPUTEX Huang announced the SPECTRUM-4, a colossal switch that marries Ethernet and InfiniBand, with a 400Gbps BlueField 3 SmartNIC. Huang said the switch and the new SmartNIC will together allow AI traffic to flow through data centers and bypass the CPU, avoiding bottlenecks along the way. The Register will seek more details as they become available.

Helios is expected to come online by the end of the year. And while Nvidia emphasizes its AI performance in FP8, the system should be able to deliver peak performance of somewhere in the neighborhood of 68 petaflops. This would put it roughly on par with France's Adastra system, which, as of last week, holds the No. 12 spot on the Top500 ranking. ®

- With Simon Sharwood.