
Sysadmin chatbots: We have the technology

Hey Alexa, manage my system!

Storage Blockhead: Chatbots are flashing up in our future view as something that could improve an admin's lot. Instead of doing the job through a GUI with nested, drill-down screen forms, admins would have a new form of Command Line Interface, only this will be a Chat Line Interface to a chatbot.

Array vendor Tintri is showing how this could be done with TintriBot (Tbot), actually using Slack, a realtime collaboration app. Think of it crudely as an updated Lotus Notes. It contains Slackbot, which will answer text queries.

Now, Slackbot is stupid, as this interaction shows:

chris_mellor [11:46 AM] How does slackbot work

slackbot [11:46 AM] I'm sorry, I don't understand! Sometimes I have an easier time with a few simple keywords. Or you can head to our wonderful Help Centre for more assistance!

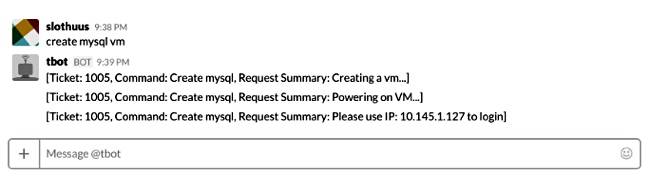

That's because Slackbot's code is limited. TintriBot is written to understand text commands in the Tintri admin universe. Here's an example from Slothuus.net:

Tbot has created a MySQL virtual machine (VM). You could tell it to create a snapshot of a VM, identified by its IP address or set a quality of service rating for a VM.

OK, so far so good. But what if you could go further in two ways? One is to speak commands to Tbot. The second is to ask Tbot questions in speech: "Tbot-Alexa, what's the status of the T3500?"

What would we need to happen here?

Spoken commands

Assuming Amazon's Alexa interface was used, Tintri would need to supply a skill to Amazon's Alexa speech interface/output system.

Amazon states: "When a user speaks to an Alexa-enabled device, the speech is streamed to the Alexa service in the cloud. Alexa recognises the speech, determines what the user wants, and then sends a structured request to the particular skill that can fulfil the user's request. All speech recognition and conversion is handled by Alexa in the cloud."

So, to reuse the example above, Tbot-Alexa would hear speech saying: "Create MySQL VM." It would turn that into text and send it to the Tintri system, using the Tintri API, in the same way that Tbot, in Slack, sends its received text to the Tintri management software using the API.
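To make that flow concrete, here is a minimal sketch of the skill side in Python. It assumes the structured request arrives as JSON in the shape Alexa uses for custom skills (a `request` object carrying an `intent` with `slots`) and that the reply is a plain-text `outputSpeech` object. The intent name `CreateVmIntent`, the slot name `template`, and the `create_vm` function are hypothetical placeholders: the real Tintri API call is not described here, so it is stubbed out.

```python
from typing import Any, Callable, Dict


def create_vm(template: str) -> str:
    """Hypothetical stand-in for a call to the Tintri management API."""
    return f"Creating {template} VM."


# Hypothetical intent names mapped to back-end actions.
INTENT_HANDLERS: Dict[str, Callable[[str], str]] = {
    "CreateVmIntent": create_vm,
}


def speak(text: str, end_session: bool = True) -> Dict[str, Any]:
    """Wrap text in a plain-text speech response envelope."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def handle_request(event: Dict[str, Any]) -> Dict[str, Any]:
    """Route one structured request to the matching back-end action."""
    request = event.get("request", {})
    if request.get("type") != "IntentRequest":
        # Anything that is not a recognised command, such as a launch.
        return speak("Tbot is ready.", end_session=False)

    intent = request.get("intent", {})
    handler = INTENT_HANDLERS.get(intent.get("name", ""))
    if handler is None:
        return speak("I'm sorry, I don't understand.")

    # The slot carries the thing the admin asked for, e.g. "MySQL".
    template = intent.get("slots", {}).get("template", {}).get("value", "")
    if not template:
        return speak("Which kind of VM?", end_session=False)
    return speak(handler(template))
```

The point of the sketch is that the speech front end only changes where the command text comes from. Everything after `handle_request` picks a handler is the same work Tbot already does in Slack.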

The Tintri system creates the VM and sends status messages back to the user: "Ticket: 1005, Command Create mysql, Request Summary: Creating a VM..." which would be routed to Tbot-Alexa, which would speak the summary text. That would sound very clunky. Better to send back text to be spoken, which says:

- "MySQL VM create request received."

- "Creating VM."

- "Powering up VM."

- "Created. Please use IP address 10.145.1, 127 to log in."

Send the formal messages to Tbot-Slack or some other log file for a sequential and permanent record. You could see how this might work.
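A rough sketch of that split, in Python: the full formal message goes to a log, and only the summary part is turned into something fit to speak. It assumes the formal messages keep the "Request Summary:" field seen in the example above; the logger name `tbot.audit` and the fallback phrase are my own placeholders.

```python
import logging

# Hypothetical logger name; point it at a file or at Tbot-Slack as needed.
audit_log = logging.getLogger("tbot.audit")

SUMMARY_MARKER = "Request Summary:"


def to_speech(formal_message: str) -> str:
    """Log the formal message verbatim and return a short spoken version."""
    audit_log.info(formal_message)

    # Keep only the text after the summary marker, if there is one.
    _, found, summary = formal_message.partition(SUMMARY_MARKER)
    if not found:
        return "Request updated."

    # Drop surrounding spaces and any trailing dots, then end with one full stop.
    summary = summary.strip().rstrip(".").strip()
    return f"{summary}." if summary else "Request updated."
```

Fed the formal message quoted earlier, `to_speech` would hand "Creating a VM." to the speech layer, while the ticket number and command stay in the written record.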

The Tbot-Alexa input device hardware could be a mobile phone or an Echo-like product, and the speech recognition AI could be Alexa, or Cortana, or Google, or Siri – it's not important to the overall design I'm playing with here. You choose the best one for the job in terms of speech recognition quality, licensing scheme, cost etc.

As far as Tbot is concerned, it gets commands from an Alexa front end and outputs responses to it. Downstream of the request IO in Tbot's code stack, nothing much changes. Asking questions, though, will be vastly different.

Questions

So we ask: "Tbot-Alexa, what's the status of the T3500?" Tbot-Alexa turns this into text and sends a command to Tintri's management software to produce a summary report which it sends back to Tbot-Alexa.

But Tbot-Alexa and the Tintri management SW have to know and agree on what "T3500" means. An IP address might have to be used. The output summary report has to be crisp and to the point, with something like this spoken: "T3500 running at 77 per cent resource usage with three amber warnings."
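One way to picture that agreement is a shared lookup from the spoken name to an address, plus a formatter that boils a status report down to one sentence. The Python below is only a sketch under those assumptions: the `ARRAYS` registry, its placeholder address (taken from the range reserved for documentation), and the `ArrayStatus` fields are all hypothetical, not anything Tintri publishes.

```python
from dataclasses import dataclass
from typing import Dict, Optional

# Hypothetical registry agreed between the speech layer and the
# management software. The address is a documentation placeholder.
ARRAYS: Dict[str, str] = {"t3500": "192.0.2.10"}


@dataclass
class ArrayStatus:
    """Hypothetical shape of a status report for one array."""
    name: str
    usage_percent: int
    amber_warnings: int


def resolve(spoken_name: str) -> Optional[str]:
    """Map a spoken array name to its address, or None if unknown."""
    return ARRAYS.get(spoken_name.strip().lower())


def summarise(status: ArrayStatus) -> str:
    """Reduce a status report to one short spoken sentence."""
    plural = "" if status.amber_warnings == 1 else "s"
    return (
        f"{status.name} running at {status.usage_percent} per cent "
        f"resource usage with {status.amber_warnings} amber warning{plural}."
    )
```

If `resolve` returns `None`, the sensible spoken reply is a request to repeat or spell the name, rather than a guess at which array was meant.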

So you ask, of course: "What are the amber warnings?"

Tbot-Alexa has to know the context here, that the amber warnings relate to your T3500 array now, and not someone else's, and that they are the current amber warnings and not historic ones. This is vastly more difficult than creating a MySQL VM. With spoken command input, Tbot-Alexa needs generally to be no more complicated than Tbot-Slack.

But with spoken question input, Tbot-Alexa would need its own AI skills to understand the context of follow-up questions.
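The simplest piece of that context problem can at least be sketched: remember, per user, which array was last mentioned, and forget it after a while so a stale reference is not silently reused. The Python below does only that. The class and function names and the two-minute expiry are assumptions for illustration, and real follow-up understanding would need far more than this.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class ContextStore:
    """Remembers the last array each user talked about, for a limited time."""

    def __init__(self, ttl_seconds: float = 120.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._last: Dict[str, Tuple[str, float]] = {}

    def remember(self, user: str, array: str) -> None:
        """Record that this user has just referred to this array."""
        self._last[user] = (array, self._clock())

    def recall(self, user: str) -> Optional[str]:
        """Return the user's last array, or None if unknown or expired."""
        entry = self._last.get(user)
        if entry is None:
            return None
        array, seen_at = entry
        if self._clock() - seen_at > self._ttl:
            del self._last[user]
            return None
        return array


def resolve_target(store: ContextStore, user: str,
                   named_array: Optional[str]) -> Optional[str]:
    """Use the array named in the question, else fall back to context."""
    if named_array:
        store.remember(user, named_array)
        return named_array
    return store.recall(user)
```

Keying the store by user is what keeps "the amber warnings" tied to your array and not someone else's. When `resolve_target` returns `None`, the bot should ask which array is meant.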

Suppose Tbot-Alexa replies to the amber warnings question with this: "Two amber warnings relate to fan under-performance and replacement parts are on the way. One has no identified cause but trend extrapolation suggests device failure in ten days."

OK, we could see how Tintri's management SW could supply the data for such output. But then there could be a follow-up question.

"Tbot-Alexa, any suggestions?"

Tintri predictive analytics software could already have mined billions of array sensor data points and have data referring to the device component in question. The question would be turned into a command and an analytic search could be run, in realtime, to provide information, which could produce an answer such as: "87 per cent of such devices have had problems when sited near the array cooling block."
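Stripped of the billions of data points, the search behind such an answer is a filter and a ratio. The Python sketch below shows that shape only: the `DeviceRecord` fields are hypothetical, and the real analytics software would be working from its own sensor schema.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class DeviceRecord:
    """Hypothetical record for one device of the component type in question."""
    location: str
    had_problem: bool


def problem_rate(records: Iterable[DeviceRecord],
                 location: str) -> Optional[float]:
    """Percentage of devices at a location that had problems, or None if no data."""
    matching = [r for r in records if r.location == location]
    if not matching:
        return None
    problems = sum(1 for r in matching if r.had_problem)
    return 100.0 * problems / len(matching)


def phrase(rate: Optional[float], location: str) -> str:
    """Turn the computed rate into a sentence for the speech layer."""
    if rate is None:
        return f"No data for devices sited near the {location}."
    return (
        f"{round(rate)} per cent of such devices have had problems "
        f"when sited near the {location}."
    )
```

Returning `None` for an empty match keeps the bot from speaking a confident-sounding zero per cent when it simply has no records.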

A combination of Big Data analytics on product sensor data and machine learning, operating under a Tbot-Alexa speech interface layer, could produce a usable speech interface for admin staff, one that handles both commands and questions and returns helpful data.

Back-of-envelope burbling

This is just back-of-the-envelope burbling by me. Although fantasy, I don't think it's too fantastic. Is it realistic? Will we see speech-driven system management chatbots? Or is this kind of thing so far removed from telling Alexa to turn on the kitchen lights as to be ridiculous?

Question: "Hey, Tbot-Alexa, is this realistic?"

Answer: "I'm sorry, I don't understand! Sometimes I have an easier time with a few simple keywords. Or you can head to our wonderful Help Centre for more assistance!"

Great. ®