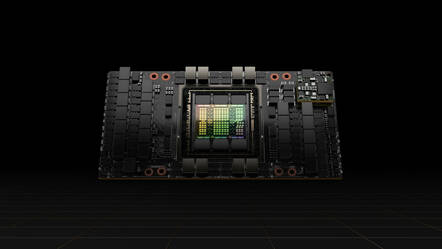

Nvidia reveals specs of latest GPU: The Hopper-based H100

Performance boost promised, power stakes raised by 300 Watts

GTC Nvidia has unveiled its H100 GPU powered by its next-generation Hopper architecture, claiming it will provide a huge AI performance leap over the two-year-old A100, speeding up massive deep learning models in a more secure environment.

The new processor is also more power-hungry than ever before, demanding up to 700 Watts for the H100's SXM form factor, which requires Nvidia's custom HGX motherboard, raising the power stakes 300 Watts higher than the thermal design of the chip designer's A100 counterpart.

After months of anticipation, the GPU giant revealed not only the Hopper-fueled H100, but also H100-powered systems and reference architectures, and plenty of other details about the datacenter-grade GPU, during CEO Jensen Huang's keynote at his corporation's virtual GTC 2022 event on Tuesday.

The 700-watt figure sounds like a lot, but Paresh Kharya, Nvidia's director of data center computing, told The Register that the H100 is still more power-efficient, offering over 3x the performance-per-watt of the A100.

Kharya based this on Nvidia's claim that the H100 SXM part is capable of four petaflops, or four quadrillion floating-point operations per second, for FP8, the company's new floating-point format for 8-bit math that is its stand-in for measuring AI performance. The SXM part will be complemented by PCIe form factors when it launches in the third quarter.

By Nvidia's measure, this makes the H100 6x faster than the A100, and Kharya said the GPU also offers performance multiples at higher levels of floating-point precision: 3x faster for FP16 (2 petaflops), 3x faster for Nvidia's FP32-adjacent TensorFloat32 format (1 petaflop) and 3x faster for FP64 (60 teraflops). Kharya said these stats make the H100 a heavy hitter against competitors in the AI space, including AMD, Cerebras Systems and Graphcore.
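For readers who want to check the "over 3x" performance-per-watt claim, here is a rough back-of-the-envelope sketch using only the figures quoted above. The A100 baseline is derived from the article's 6x multiple and the 300-watt gap; it is an assumption for illustration, not an official Nvidia spec.

```python
# Figures quoted in the article for the H100 SXM part
H100_FP8_PFLOPS = 4.0            # claimed FP8 throughput, in petaflops
H100_WATTS = 700                 # maximum power for the SXM form factor
A100_WATTS = H100_WATTS - 300    # article: H100 draws 300 W more than the A100
SPEEDUP_VS_A100 = 6.0            # article's headline performance multiple

# Implied A100 baseline (derived from the 6x claim, NOT an official spec)
a100_baseline_pflops = H100_FP8_PFLOPS / SPEEDUP_VS_A100


def teraflops_per_watt(pflops: float, watts: float) -> float:
    """Convert petaflops to teraflops and divide by the power draw."""
    return pflops * 1000 / watts


h100_eff = teraflops_per_watt(H100_FP8_PFLOPS, H100_WATTS)
a100_eff = teraflops_per_watt(a100_baseline_pflops, A100_WATTS)
efficiency_gain = h100_eff / a100_eff

print(f"H100: {h100_eff:.2f} TFLOPS/W, implied A100: {a100_eff:.2f} TFLOPS/W")
print(f"Performance-per-watt gain: {efficiency_gain:.2f}x")
```

In other words, 6x the performance at 1.75x the power works out to roughly 3.4x the performance-per-watt, which squares with Kharya's figure.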

"Each H100 will have 4 petaflops of AI computing. Nothing even comes even close to that level of AI performance," he said. "And combined with our software stack and a scalable platform that goes to the full data-center-scale, we are very well-positioned to continue to deliver performance benefits to our customers."

Speeds, feeds and AI scaling dreams

The H100 is made up of 80 billion transistors using a custom 4nm process from TSMC, which Nvidia said makes the GPU the "world's most advanced chip." The GPU's architecture, Hopper, is the successor to 2020's Ampere and is named after US computer science pioneer Grace Hopper, whose first name is being used for Nvidia's first server CPU due in 2023.

Nvidia said the H100 is the first GPU to support PCIe Gen5 connectivity, which doubles the throughput of the previous generation to 128GBps. It's also the first to use the HBM3 high-bandwidth memory specification, sporting 80GB in total memory and delivering up to 3TBps of memory bandwidth, a 50 percent increase over the A100. The GPU is capable of sending data super-fast off the chip too, thanks to its nearly 5TBps of external connectivity.
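To put those bandwidth numbers in perspective, the sketch below works out the implied previous-generation figures and how long it would take to move the card's full 80GB at each quoted rate. It assumes decimal units (1TB = 1,000GB) and treats the quoted rates as sustained peaks, which real workloads rarely achieve.

```python
# Figures quoted in the article (decimal units assumed: 1 TB = 1000 GB)
HBM3_CAPACITY_GB = 80
HBM3_BANDWIDTH_GBPS = 3000     # "up to 3TBps"
PCIE_GEN5_GBPS = 128

# Implied previous-generation figures, derived from the article's ratios
implied_a100_mem_gbps = HBM3_BANDWIDTH_GBPS / 1.5   # 3TBps is a 50% increase
implied_pcie_gen4_gbps = PCIE_GEN5_GBPS / 2         # Gen5 doubles Gen4


def transfer_seconds(gigabytes: float, gb_per_second: float) -> float:
    """Idealized transfer time, ignoring latency and protocol overhead."""
    return gigabytes / gb_per_second


hbm_sweep_s = transfer_seconds(HBM3_CAPACITY_GB, HBM3_BANDWIDTH_GBPS)
pcie_fill_s = transfer_seconds(HBM3_CAPACITY_GB, PCIE_GEN5_GBPS)

print(f"Implied A100 memory bandwidth: {implied_a100_mem_gbps:.0f} GBps")
print(f"Implied PCIe Gen4 throughput: {implied_pcie_gen4_gbps:.0f} GBps")
print(f"Read all 80GB from HBM3: {hbm_sweep_s * 1000:.1f} ms")
print(f"Fill 80GB over PCIe Gen5: {pcie_fill_s * 1000:.0f} ms")
```

The gap between the two times is a reminder of why on-package memory matters: even at Gen5 speeds, filling the card over PCIe takes far longer than sweeping its local memory.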

These advancements make up one of six "breakthrough innovations" Nvidia is claiming for the H100, which also include a new Transformer Engine for speeding up the popular deep learning model type that powers many natural language processing workloads.

Kharya said the Transformer Engine, working in conjunction with Nvidia software, "intelligently" manages the precision of transformer models between 8-bit and 16-bit formats while maintaining accuracy to speed up the training of such models by as much as 6x compared to the A100. This can reduce the time it takes to train transformer models, which can have as many as 530 billion parameters, from weeks to days, he said.
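One way to see why the choice between 8-bit and 16-bit formats matters at this scale is to count the bytes. The sketch below estimates the memory needed just to hold the weights of a 530-billion-parameter model at each precision, and how many 80GB H100s that would span. It is a simplification that ignores optimizer state, gradients and activations, and it says nothing about how the Transformer Engine itself decides which precision to use.

```python
import math

PARAMS = 530_000_000_000          # largest model size cited in the article
GPU_MEMORY_BYTES = 80 * 10**9     # 80GB of HBM3 per H100 (decimal units assumed)


def weight_bytes(params: int, bits_per_param: int) -> int:
    """Bytes needed to store the weights alone at a given precision."""
    return params * bits_per_param // 8


def min_gpus_for_weights(total_bytes: int, bytes_per_gpu: int) -> int:
    """Smallest number of GPUs whose combined memory fits the weights."""
    return math.ceil(total_bytes / bytes_per_gpu)


for bits in (16, 8):
    total = weight_bytes(PARAMS, bits)
    gpus = min_gpus_for_weights(total, GPU_MEMORY_BYTES)
    print(f"{bits}-bit weights: {total / 10**9:.0f} GB, at least {gpus} GPUs")
```

Halving the bits halves the footprint, which is part of the appeal of dropping to 8-bit wherever accuracy allows.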

Another breakthrough comes from Nvidia's implementation of confidential computing for the H100, which the company said is a first for a GPU. This allows the GPU, working with an Intel or AMD CPU, to create a so-called Trusted Execution Environment in a virtualized environment that is protected from the hypervisor, the operating system or anyone with physical access.

The H100's other breakthroughs include the fourth-generation Nvidia NVLink interconnect, which – when combined with an external NVLink Switch – allows up to 256 H100s to connect over a network at a bandwidth that is 9x higher than the previous generation. The GPU also comes with Nvidia's second generation of Multi-Instance GPU that can now virtualize the throughput and fully isolate each of the feature's seven GPU instances.

The final highlight is the H100's new DPX instructions, which speed up dynamic programming – a method popular for a broad range of algorithms – by as much as 40x compared to CPUs and up to 7x compared to previous-generation GPUs.
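For anyone unfamiliar with the term, dynamic programming solves a problem by filling in a table of smaller sub-problems, each built from its neighbors with simple add-and-compare steps. The sketch below uses edit distance, a textbook example chosen here purely for illustration. It is plain Python running on a CPU and does not use, or claim to represent, the DPX instructions themselves.

```python
def edit_distance(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions and
    substitutions needed to turn string a into string b."""
    # prev[j] is the distance between the processed prefix of a
    # and the first j characters of b; start with the empty prefix.
    prev = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        curr = [i]  # turning i characters of a into an empty string costs i
        for j, char_b in enumerate(b, start=1):
            substitution_cost = 0 if char_a == char_b else 1
            curr.append(min(
                prev[j] + 1,                      # delete char_a
                curr[j - 1] + 1,                  # insert char_b
                prev[j - 1] + substitution_cost,  # match or substitute
            ))
        prev = curr
    return prev[-1]


print(edit_distance("kitten", "sitting"))
```

The inner loop is nothing but additions and a three-way minimum repeated across a grid, which hints at why this class of algorithm is a plausible target for dedicated instructions.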

Coming to a server – or cloud – near you

Nvidia is hoping to see widespread adoption of the H100 across on-premises data centers, cloud instances and edge servers, and it promises to spur new buying cycles with a long list of server makers and cloud providers, including Amazon Web Services, Cisco, Dell Technologies, Google Cloud, Hewlett Packard Enterprise, Lenovo and Microsoft Azure.

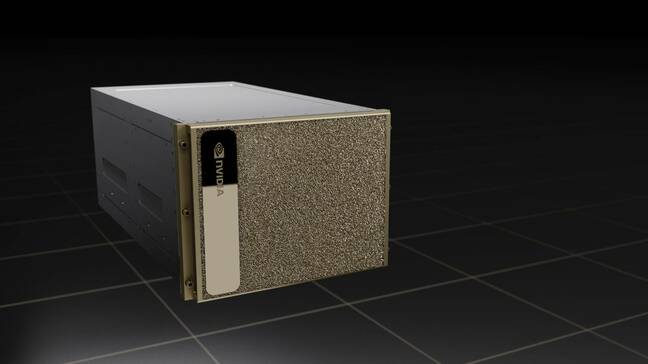

As is tradition now, Nvidia is bringing the H100 to its line of DGX systems, which are pre-loaded with Nvidia software and optimized to provide the fastest AI performance. Appropriately called the DGX H100, the new system will feature eight GPUs, making it capable of delivering 32 petaflops of AI performance with Nvidia's FP8 format, which is 6x faster than the previous generation, according to the company.

A rendering of Nvidia's new DGX H100 system

Thanks to Nvidia's new generation of NVLink Switch technology, the company can connect up to 32 DGX H100 systems in a DGX SuperPOD cluster, making it capable of one exaflop of FP8, or one quintillion floating-point calculations per second, according to the company.

The chip designer can even connect multiple 32-system clusters together, and when we say "multiple," we mean quite a lot.

A case in point? Nvidia's newly announced Eos supercomputer, which connects 18 DGX SuperPOD clusters that consist of a total of 576 DGX H100s. The company said this new supercomputer can provide 18 exaflops of FP8, 9 exaflops of FP16 and 275 petaflops of FP64.
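The system-level numbers follow fairly directly from the per-GPU claims. The sketch below multiplies them out, assuming perfectly linear scaling from one GPU up to Eos, which is an idealization and not something the article or Nvidia's figures guarantee.

```python
# Per-GPU peak figures quoted earlier in the article
GPU_FP8_PFLOPS = 4
GPU_FP16_PFLOPS = 2
GPU_FP64_TFLOPS = 60

# System sizes quoted in the article
GPUS_PER_DGX = 8
DGX_PER_SUPERPOD = 32
SUPERPODS_IN_EOS = 18

dgx_fp8_pflops = GPUS_PER_DGX * GPU_FP8_PFLOPS            # one DGX H100
superpod_fp8_pflops = DGX_PER_SUPERPOD * dgx_fp8_pflops   # one SuperPOD

eos_systems = SUPERPODS_IN_EOS * DGX_PER_SUPERPOD
eos_gpus = eos_systems * GPUS_PER_DGX

# Idealized linear scaling across all GPUs in Eos
eos_fp8_exaflops = eos_gpus * GPU_FP8_PFLOPS / 1000
eos_fp16_exaflops = eos_gpus * GPU_FP16_PFLOPS / 1000
eos_fp64_pflops = eos_gpus * GPU_FP64_TFLOPS / 1000

print(f"DGX H100: {dgx_fp8_pflops} PF FP8")
print(f"SuperPOD: {superpod_fp8_pflops} PF FP8 (about 1 exaflop)")
print(f"Eos: {eos_systems} systems, {eos_gpus} GPUs")
print(f"Eos: {eos_fp8_exaflops:.1f} EF FP8, {eos_fp16_exaflops:.1f} EF FP16, "
      f"{eos_fp64_pflops:.0f} PF FP64")
```

The multiplied-out totals land slightly above the rounded figures Nvidia quoted for Eos, including the 275 petaflops of FP64, which suggests the company's numbers are rounded peak estimates along the same lines.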

When the H100 launches in the third quarter, it will be available in three form factors. The first, SXM, will enable the fastest performance for AI training and inference, but it will only be available in servers that use Nvidia's HGX H100 server boards. The second form factor is a PCIe card for mainstream servers, which uses NVLink to connect two GPUs and provide 7x more bandwidth than PCIe Gen5 connectivity, Nvidia said.

The third option is the H100 CNX, a PCIe card that brings the H100 together with a ConnectX-7 SmartNIC from Nvidia's Mellanox acquisition for workloads that need high throughput, such as multi-node AI training for businesses or 5G signal processing in edge environments. ®